- MCP servers standardize how AI agents access tools and data

- They improve scalability but increase cost, latency, and security exposure

- Success depends on architecture choice and strict access control

MCP servers are a popular topic in the AI space, and it’s easy to see why; they make it possible to build dynamic, powerful AI agents in record time.

While these servers bring great power to agentic systems, they also carry risks that I have rarely heard discussed in my three years as an AI engineer and the founder of Aimec.

In this article, I’ll look at MCP servers in more detail, go over the risks and rewards, and share experiences from my own professional work integrating with MCP servers.

What MCP is

Model Context Protocol (MCP) is an open protocol that standardises how applications provide context and tools to large language models, and is often described as “a USB‑C port for AI applications.”

Anthropic open-sourced MCP in late 2024, positioning it as a universal standard to connect AI assistants to where data lives (content repos, business tools, dev environments), reducing fragmented integrations.

The protocol is built on JSON-RPC 2.0 messages and formalises communication between:

– Hosts (AI applications that initiate connections),

– Clients (connectors inside the host, one per server connection),

– Servers (programs that provide context and capabilities).
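To make the message format concrete, here is a minimal sketch of what a JSON-RPC 2.0 request and its response might look like for a tool call. The method name `tools/call` follows the MCP specification; the tool name `get_page_views`, its arguments, and the result text are hypothetical.

```python
import json

# A JSON-RPC 2.0 request a client might send to invoke a tool.
# "get_page_views" and its arguments are hypothetical, for illustration only.
request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {
        "name": "get_page_views",
        "arguments": {"page": "/pricing", "days": 7},
    },
}

# A matching response: same "id", with the tool output under "result".
response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "content": [{"type": "text", "text": "1234 views in the last 7 days"}],
        "isError": False,
    },
}


def is_reply_to(req: dict, resp: dict) -> bool:
    """A response answers a request when the JSON-RPC ids match."""
    return resp.get("jsonrpc") == "2.0" and resp.get("id") == req.get("id")


wire = json.dumps(request)  # the text that actually travels over the transport
print(wire)
print(is_reply_to(request, response))
```

The `id` field is what lets a client pair each response with the request that triggered it, which matters once several calls are in flight at the same time.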

The way I like to think about it is as a set of functions that are presented in a code structure that is easy for the AI to understand and use. When I start scoping the development for a client, I usually first look to see if there is an MCP server or a number of servers available that can help speed up the development of the solution. In addition to speeding up development, MCP servers make an AI agent so much more powerful and dynamic.

As a software developer, I often see a client review the initial version or minimum viable product (MVP) of a solution and then ask for additional features. This leads to another pricing discussion and then additional development that was not planned for. By integrating with MCP servers when developing a solution for a client, adding more functionality to the product can be as simple as editing the prompt that is fed to the AI.

What an MCP server is

An MCP server is a program that exposes specific capabilities to AI applications through the protocol interface—examples include filesystem access, database querying, developer tooling integrations, team communication systems, and scheduling systems.

According to the official MCP documentation, there are three primary server “building blocks”:

– Tools: executable functions the model can invoke (often with user/developer approval gates).

– Resources: read-only, file-like data used as context (documents, schemas, API docs).

– Prompts: reusable instruction templates aimed at common workflows.
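As a rough illustration of the three building blocks, the sketch below shows how a server might describe one tool, one resource, and one prompt. The field names are modeled on the MCP specification and should be checked against the current version; every value, and the `missing_arguments` helper, is hypothetical.

```python
# Illustrative descriptors for the three building blocks.
# Field names are modeled on the MCP specification (an assumption to verify);
# all values are hypothetical.
tool = {
    "name": "run_report",
    "description": "Run a traffic report for a date range.",
    "inputSchema": {
        "type": "object",
        "properties": {"start": {"type": "string"}, "end": {"type": "string"}},
        "required": ["start", "end"],
    },
}

resource = {
    "uri": "file:///docs/schema.sql",
    "name": "Database schema",
    "mimeType": "text/plain",
}

prompt = {
    "name": "weekly_summary",
    "description": "Template for a weekly traffic summary.",
    "arguments": [{"name": "site", "required": True}],
}


def missing_arguments(tool_def: dict, arguments: dict) -> list:
    """Return required tool arguments that the caller did not supply."""
    required = tool_def["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


print(missing_arguments(tool, {"start": "2025-01-01"}))
```

Only the tool carries an input schema, because it is the only one of the three that the model executes with arguments of its own choosing.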

Developer note: While there are many MCP servers in the market, the official “servers” repo is explicit that many reference servers are educational examples, not automatically production-ready. As such, you must evaluate your own threat model and safeguards.

Protocol Mechanics in Real-World Systems

To implement MCP servers effectively in production environments, there are specific transport mechanisms, security protocols, and authorization flows that developers need to navigate. These dictate how the components interact within a system.

Core Transport Mechanisms

The architecture of an MCP integration begins with the choice of transport.

When using MCP servers, there are two primary mechanisms that cater to different deployment needs: STDIO and Streamable HTTP.

Process-Based Communication: STDIO

In many local or tightly integrated environments, STDIO is the default choice.

Under this model, the client launches the MCP server as a managed subprocess. Communication occurs via standard input and output streams, where messages are strictly newline-delimited.

A critical technical constraint of this transport, however, is that the server’s stdout must be reserved exclusively for protocol messages. Any extraneous logging or “print” statements from the server code can corrupt the message stream and break the connection.
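The stdout constraint is easy to get wrong, so here is a minimal sketch of one way to respect it in Python: route all logging to stderr and write only serialized protocol messages to stdout. The logger name and the `send` helper are hypothetical, not part of any SDK.

```python
import json
import logging
import sys

# Send all diagnostics to stderr so stdout carries protocol messages only.
logging.basicConfig(stream=sys.stderr, level=logging.INFO)
log = logging.getLogger("demo-server")


def encode_message(message: dict) -> str:
    """Serialize one JSON-RPC message as a single newline-terminated line."""
    # json.dumps escapes newlines inside strings, so the line stays intact.
    return json.dumps(message, separators=(",", ":")) + "\n"


def send(message: dict) -> None:
    """Log to stderr, then write the protocol message to stdout."""
    log.info("sending message id=%s", message.get("id"))  # goes to stderr
    sys.stdout.write(encode_message(message))  # protocol traffic only
    sys.stdout.flush()


send({"jsonrpc": "2.0", "id": 1, "result": {"ok": True}})
```

A stray `print()` anywhere in the server, including inside a dependency, would land on stdout and break this framing, which is why redirecting logs at startup is a sensible default.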

Networked Communication: Streamable HTTP

For distributed systems where the server runs as an independent process, Streamable HTTP is used.

This transport supports standard POST and GET requests, allowing for more flexible deployment across containers or cloud environments. To handle long-running operations or asynchronous updates, it optionally utilizes Server-Sent Events (SSE), enabling the server to stream data back to the client over a persistent connection.
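To show what the streamed side of this can look like, the sketch below parses a Server-Sent Events body into JSON-RPC messages. It is a simplified parser that only reads `data:` fields and assumes `\n` line endings; the sample payloads are hypothetical.

```python
import json


def parse_sse(stream_text: str) -> list:
    """Extract JSON payloads from the `data:` fields of an SSE stream.

    Events are separated by a blank line; multiple data lines within one
    event are joined with newlines. Other fields (event, id) are ignored.
    """
    messages = []
    for event in stream_text.split("\n\n"):
        data_lines = [
            line[len("data:"):].lstrip(" ")
            for line in event.splitlines()
            if line.startswith("data:")
        ]
        if data_lines:
            messages.append(json.loads("\n".join(data_lines)))
    return messages


# Hypothetical stream: a progress notification followed by the final result.
sample = (
    "event: message\n"
    'data: {"jsonrpc":"2.0","method":"notifications/progress","params":{"progress":50}}\n'
    "\n"
    "event: message\n"
    'data: {"jsonrpc":"2.0","id":1,"result":{"ok":true}}\n'
    "\n"
)

for msg in parse_sse(sample):
    print(msg)
```

In practice an SDK or HTTP client library would handle this parsing; the point is only that each event carries one ordinary JSON-RPC message.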

Hosting Models

MCP can be deployed across classic infrastructure models (on‑prem, cloud, hybrid) because the “server” is just a program and the protocol is transport-agnostic.

Below is a comparison of the different hosting options:

| Hosting Model | Typical MCP Deployment Shape | Strengths | Trade-offs and Risks | Best-fit Scenarios |
| --- | --- | --- | --- | --- |
| On‑premises | Streamable HTTP MCP server inside your network boundary; optionally local STDIO for developer workstations | Strong control over data residency; low latency to internal systems; integration with internal IAM and network security patterns | Requires internal ops maturity; patching and vulnerability management are your responsibility, since MCP servers expose tool execution surfaces | Regulated data, internal tools, enterprise automation |
| Cloud | Streamable HTTP MCP server exposed over the Internet or private networking; often fronted by an API gateway | Fast provisioning, elastic scaling, managed observability stack; simplifies remote access from AI apps | Larger external attack surface; careful authentication, origin validation, and authorization required; data egress and cross-zone latency | Public-facing AI products; multi-region use; partner integrations |
| Hybrid | MCP servers close to data (on-prem) + model serving / orchestration in cloud, or vice versa | Keeps sensitive data local while using cloud scale for inference/training workloads (common enterprise pattern) | Integration complexity: identity federation, network routing, consistent audit logs; must avoid “confused deputy” patterns in proxy servers | Enterprises adopting AI iteratively; mixed data residency constraints |

A Practical Architecture Lens: “Local Tool Servers” vs “Remote Tool Services”

A useful way to think about MCP architectures is the transport boundary:

– Local STDIO servers are excellent for developer productivity and tight workstation integrations; they’re launched and managed by the client, and the process lifecycle is local.

– Remote Streamable HTTP servers are typically the choice for shared organisational capability services; they run independently and can serve multiple clients concurrently.

Key Benefits and Trade-Offs

Performance

MCP itself is lightweight (JSON-RPC messaging), but overall system performance is dominated by:

– Network hops (local vs remote transport).

– The volume of tool schemas and tool outputs passed through the model context window.

On the second point, Anthropic has documented a scaling issue: loading many tool definitions and passing large intermediate tool outputs through the model context can increase latency and cost, and can exceed context limits.

They propose “code execution with MCP” approaches where the agent loads tools on demand and processes data outside the model context for efficiency.
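The idea of loading tools on demand and keeping bulky data out of the context can be sketched in a few lines. This is not Anthropic's implementation; the catalog, the keyword-overlap scoring, and both helper functions are hypothetical simplifications.

```python
# Hypothetical tool catalog; in practice this would come from the servers'
# tool listings. Names and descriptions are made up for illustration.
CATALOG = {
    "ga_run_report": "Run a Google Analytics traffic report",
    "ga_list_properties": "List Google Analytics properties",
    "fs_write_file": "Write a report file to local disk",
    "repo_read_file": "Read a file from a code repository",
}


def select_tools(task: str, catalog: dict, limit: int = 2) -> list:
    """Pick the tools whose descriptions share the most words with the task."""
    task_words = set(task.lower().split())
    scored = []
    for name, description in catalog.items():
        overlap = len(task_words & set(description.lower().split()))
        if overlap:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:limit]]


def summarize_rows(rows: list) -> str:
    """Reduce a large tool result to one line before it reaches the model."""
    total = sum(row["views"] for row in rows)
    return f"{len(rows)} rows, {total} total views"


print(select_tools("run a traffic report", CATALOG))
print(summarize_rows([{"views": 10}, {"views": 32}]))
```

A real system would likely use embeddings or a search tool rather than word overlap, but the effect is the same: the model sees two tool definitions and a one-line summary instead of the full catalog and the raw rows.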

Developer Sidebar

I have seen these limitations firsthand. When I tried to develop an agent for a client that plugged into the Google Analytics MCP server, I ran into issues because I had to build the agentic system using only local, Ollama-hosted LLMs. This was to ensure data privacy and avoid exposing confidential client information to third-party AIs such as ChatGPT or Claude.

The client was hosting a 4 billion parameter model via Ollama, which was great for speed and budget and had served its purpose up to that point. However, integrating with the GA4 MCP server was a problem because of how much context the model would have to take in to use the tools. Before we could even address that, I noticed that the client’s local LLM did not support tool use at all.

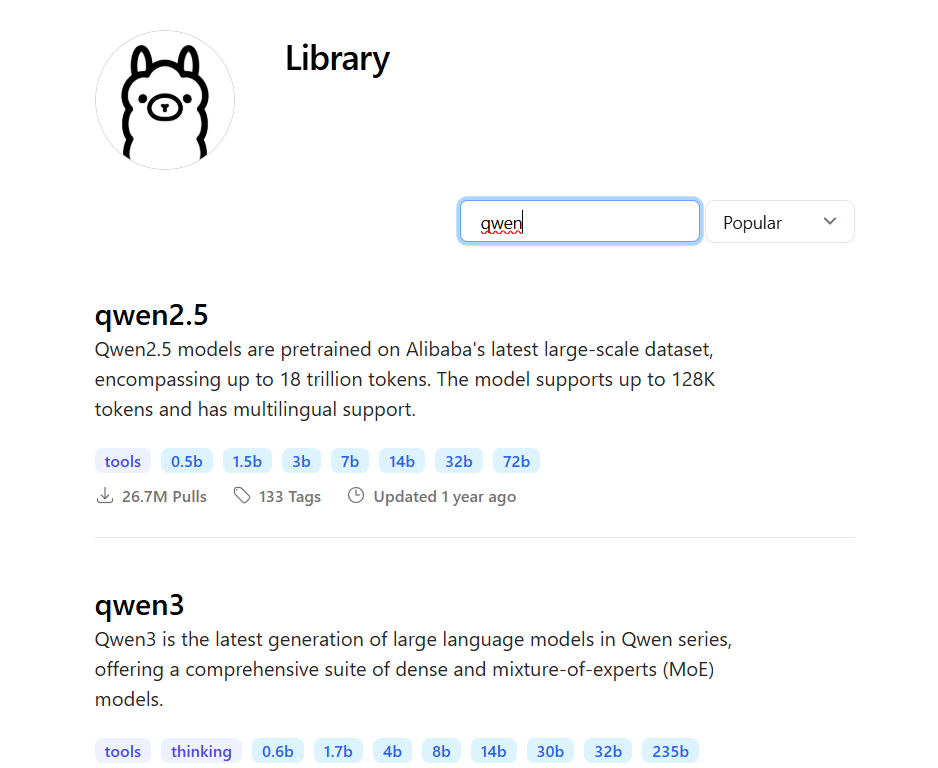

After sitting down with their decision makers, we reached the conclusion that the client would have to pull a different model via Ollama that was able to use tools. We settled for the Qwen family of models. It had different sized-models that we could try, and the client had agreed to invest into some additional infrastructure to host the model.

Qwen models available on Ollama (Source: Ollama’s website)

As you can see from the Ollama screenshot above, Qwen2.5 and Qwen3 are currently available in different sizes. I reached the conclusion, along with the rest of the client’s team, that any model with more than 30 billion parameters would overload the current infrastructure.

We tried the 30 billion parameter model first, and even this was too much for the infrastructure: it took 5 minutes to return a simple response. Eventually, we tried the 14 billion parameter Qwen2.5 model and found that it provided the best balance of accuracy and speed.

To further lighten the load, I then sat down with the team to get a better understanding of what their needs were. While my preferred approach is to make an agentic system as dynamic as possible through MCP integrations, this method was just not going to work given the limited resources.

After establishing what the team’s needs were, I identified the functions of the Google Analytics server that gave the AI agent the functionality the client wanted, and replicated them with Python functions. I then set these functions as tools for the agent, along with basic reporting and file management functionality. This enabled the agent to extract the relevant information from Google Analytics, gather insights, and generate reports with any structure before saving them locally, which is still very dynamic in my opinion.
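A stripped-down sketch of that pattern looks like the following. It is not the client's code: `top_pages`, the sample rows, and the OpenAI-style function schema (a format many local runtimes accept, though you should check your own runtime's documentation) are all assumptions for illustration.

```python
import json


def top_pages(rows: list, limit: int = 3) -> list:
    """Hypothetical stand-in for one capability copied from the GA server."""
    return sorted(rows, key=lambda r: r["views"], reverse=True)[:limit]


# Only the functions the client actually needs are exposed to the model.
TOOLS = {"top_pages": top_pages}

# One compact schema instead of a full server's worth of tool definitions.
TOOL_SCHEMAS = [{
    "type": "function",
    "function": {
        "name": "top_pages",
        "description": "Return the most-viewed pages.",
        "parameters": {
            "type": "object",
            "properties": {"limit": {"type": "integer"}},
        },
    },
}]


def dispatch(tool_call: dict, rows: list) -> str:
    """Run a model-requested tool call; reject anything not whitelisted."""
    name = tool_call["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    result = TOOLS[name](rows, **tool_call.get("arguments", {}))
    return json.dumps(result)


rows = [{"page": "/", "views": 90}, {"page": "/pricing", "views": 40},
        {"page": "/blog", "views": 70}]
print(dispatch({"name": "top_pages", "arguments": {"limit": 2}}, rows))
```

The trade-off is flexibility for footprint: the agent can only do what was hardcoded, but a small local model has far less schema text to read before it can act.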

The above is just an example of how MCP’s performance sometimes comes at a cost, and how clients are not necessarily able to access this power with their limited resources. This is especially true for clients that need to protect private information and who want to run their agentic systems locally.

Nevertheless, where the infrastructure can support them, MCP servers still make AI agents considerably more capable.


Scalability

In addition to performance, MCP is engineered to facilitate growth across two distinct dimensions: the breadth of the ecosystem and the technical capacity of the infrastructure.

By decoupling the data source from the specific AI interface, MCP allows for highly efficient scaling strategies that address both developer productivity and system performance.

The primary driver behind MCP is the concept of universal interoperability, often referred to as integration scale. The “implement once, plug into many” philosophy ensures that developers do not need to build custom connectors for every new AI model or chat interface.

Instead, a single MCP server acts as a standardized gateway, allowing a data source to be immediately compatible with any host or client that supports the protocol. This significantly reduces engineering overhead and ensures that tools remain portable across a rapidly evolving AI landscape.

In terms of runtime scale, MCP provides the flexibility needed for high-demand environments. Remote Streamable HTTP servers can be deployed like any other modern web service and scaled horizontally behind load balancers to manage heavy traffic. Where an interaction does need state, the protocol carries it through explicit session mechanisms, so a fleet of instances can still provide a coherent, persistent experience for the user.

Cost

When evaluating the Model Context Protocol, it is helpful to categorize costs into two distinct buckets: infrastructure and model interaction.

Infrastructure costs encompass the standard operational overhead of running servers, including compute power, network bandwidth, storage, and the general maintenance required for either on-premises or cloud deployments. These costs are typically predictable and scale linearly with the physical resources allocated to the MCP servers.

In contrast, model interaction costs often emerge as the more significant and surprising expense for development teams. This bucket includes the tokens consumed by tool schemas and tool results, as well as the orchestration patterns required to manage a large ecosystem of tools. Because every tool definition adds to the input context, a massive library of available functions can quickly bloat token usage even before the model performs a task.

To manage those expenses, efficient teams prioritize strategies that minimize the model’s active context window. This includes deferring the loading of specific tools until they are strictly necessary, caching previous tool results to prevent redundant calls, and performing intermediate data processing outside the context window. By offloading these tasks, teams can maintain a high-performance system while keeping the “surprise” costs of model interaction under control.
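Two of these ideas, measuring schema overhead and caching tool results, can be sketched with the standard library alone. The four-characters-per-token ratio is a rough assumption rather than a real tokenizer, and `fetch_report` is a hypothetical stand-in for an expensive tool call.

```python
import json
from functools import lru_cache


def estimate_tokens(obj) -> int:
    """Very rough estimate: about 4 characters per token (an assumption)."""
    return len(json.dumps(obj)) // 4


def schema_overhead(tool_schemas: list) -> int:
    """Tokens spent on tool definitions before the model does any work."""
    return sum(estimate_tokens(schema) for schema in tool_schemas)


CALLS = {"count": 0}  # counts how often the underlying call really runs


@lru_cache(maxsize=128)
def fetch_report(property_id: str, day: str) -> str:
    """Hypothetical expensive tool call; repeats are served from the cache."""
    CALLS["count"] += 1
    return f"report for {property_id} on {day}"


fetch_report("site-1", "2025-01-01")
fetch_report("site-1", "2025-01-01")  # identical arguments: cache hit
print(CALLS["count"])
```

Caching only helps when results stay valid for a while, so data that changes minute to minute would need a time-based expiry rather than a plain `lru_cache`.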

Security

MCP acts as a double-edged sword for system security.

While it can serve as a robust governance layer by standardizing how models interact with data, it simultaneously expands the attack surface by creating explicit bridges between AI models and sensitive internal tools.

Because the connections provide models with the power to execute actions or retrieve information, the protocol’s security hinges on how strictly the transport and access layers are managed.

For networked deployments using HTTP, the primary defense involves rigorous origin validation and authentication. These measures are critical to preventing DNS rebinding and similar cross-site attacks that target local or internal services.
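As a minimal sketch of origin validation, the function below checks an incoming `Origin` header against an allowlist. The allowlist entries are hypothetical, and rejecting requests that carry no `Origin` header is a design assumption that may not suit non-browser clients.

```python
from typing import Optional
from urllib.parse import urlsplit

# Hypothetical allowlist; replace with the origins your clients really use.
ALLOWED_ORIGINS = {"https://app.example.com", "http://localhost:3000"}


def is_allowed_origin(origin_header: Optional[str]) -> bool:
    """Accept a request only if its Origin header is on the allowlist.

    This sketch rejects requests with no Origin header, which is an
    assumption to revisit if non-browser clients need access.
    """
    if not origin_header:
        return False
    parts = urlsplit(origin_header)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        return False
    normalized = f"{parts.scheme}://{parts.netloc}".lower()
    return normalized in ALLOWED_ORIGINS


print(is_allowed_origin("https://app.example.com"))
print(is_allowed_origin("https://evil.example.net"))
```

This check complements authentication rather than replacing it: it stops a malicious web page from driving a local server through the victim's browser, but it does nothing against a client that forges headers directly.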

While authorization is optional in the protocol, it is strongly recommended for any server exposing administrative operations or sensitive datasets. The official documentation specifically points toward OAuth-based patterns as the standard for securing these remote server interactions.

Beyond basic connectivity, MCP implementations must account for complex threats like Server-Side Request Forgery (SSRF) and “confused deputy” problems, where a server is tricked into using its elevated permissions to perform unauthorized actions on behalf of a user.

Ultimately, it is vital to remember that MCP servers are standard software components and are susceptible to traditional vulnerabilities such as path traversal, argument injection, and simple misconfiguration.

Practical examples from the ecosystem, such as early security advisories for the mcp-server-git implementation, highlight how insufficient validation can allow a model to inadvertently access files or directories outside its intended scope.
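The kind of validation that prevents this class of bug is small. Below is a minimal sketch of a path containment check, assuming Python 3.9 or later; `resolve_inside` is a hypothetical helper and not taken from any MCP server.

```python
from pathlib import Path


def resolve_inside(root: Path, requested: str) -> Path:
    """Resolve `requested` under `root`, refusing paths that escape it."""
    root = root.resolve()
    candidate = (root / requested).resolve()
    # is_relative_to (Python 3.9+) compares whole path components, so a
    # sibling such as "repo-evil" is not mistaken for a child of "repo".
    if not candidate.is_relative_to(root):
        raise PermissionError(f"{requested!r} is outside the allowed root")
    return candidate


print(resolve_inside(Path("repo"), "src/main.py"))
try:
    resolve_inside(Path("repo"), "../secrets.txt")
except PermissionError as exc:
    print("blocked:", exc)
```

Resolving before comparing is the important step, because it collapses `..` segments and follows symlinks; a plain string prefix check on the raw input would miss both.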

Treating MCP servers with the same security rigor as any other production-grade backend service is essential for maintaining system integrity.

Developer Sidebar

Before continuing, I have to bring up the Google Analytics MCP scenario that I mentioned earlier.

One approach that I could have taken with the entire system was to simply connect the Google Analytics MCP server to a third-party hosted LLM. These models have far larger context windows than the models that can be hosted locally on limited resources.

I had initially proposed that approach to the client, but a member of their team raised a valid concern. We were not using any functionality from the MCP server that had to do with customer revenue or other information a company would not want on the web; what we needed had more to do with traffic data and rankings.

However, the concern was that the third-party AI might hallucinate and, for whatever reason, call the MCP functionality that exposes the information we wanted to keep private.

Companies like OpenAI are constantly training their models. If confidential information were extracted by the LLM due to a hallucination, or for any other reason, the client feared that it could end up in the model’s training data and potentially be exposed, which could lead to compliance issues for the company.

As such, we opted to mitigate the risk as much as we could and just host the system on-prem.

Manageability

From an operational perspective, the Model Context Protocol significantly improves system manageability by bringing the discipline of traditional services engineering to the AI space.

By standardizing tool and resource schemas, it creates a uniform language for capability discovery. This allows administrators to see exactly what an AI model can do within a system at any given time, replacing the “black box” nature of bespoke integrations with a transparent, structured inventory of functions and data access points.

Manageability is further enhanced through the use of explicit operational boundaries, such as the definition of “roots” to limit a model’s scope within a filesystem. However, it is a critical management principle that these protocol-level settings are treated as coordination mechanisms rather than absolute security perimeters. Operational teams must still rely on mandatory OS-level permissions and sandboxing to ensure that the AI cannot exceed its intended environment, maintaining a “defense-in-depth” approach.

Perhaps the greatest operational benefit of MCP is that it allows for the use of reusable deployment patterns. Because MCP servers function as standard networked services, they can be managed using existing enterprise tools like containers, API gateways, and centralized policy enforcement engines.

That shift moves AI integration away from experimental, one-off setups and into the realm of professional DevOps, where reliability, monitoring, and scaling are handled through proven, industry-standard workflows.

Decide Where MCP Fits in Your AI System

Successfully deploying MCP-based systems in your environment requires defining three critical boundaries at the outset to ensure the system is both functional and secure.

First, you must determine which actions will be exposed as MCP tools, specifically distinguishing between read-only operations and those that can modify data. This classification dictates whether an action requires explicit human approval or can be safely auto-approved by the system.

Second, you must decide where these tools will execute, choosing between local execution via the STDIO transport for tightly coupled environments or remote execution via Streamable HTTP for distributed, cloud-based architectures.

Finally, you must establish an authentication and authorization strategy, selecting from interactive OAuth flows for users, machine-to-machine credentials for automated services, or existing enterprise-managed identity patterns.
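The first of these boundaries, separating read-only tools from mutating ones, can be sketched as a simple approval gate. The tool names and the `may_run` helper are hypothetical; a real deployment would hook the `ask_human` callback into whatever approval UI the host provides.

```python
from typing import Callable

# Hypothetical tool names, classified up front as read-only or mutating.
READ_ONLY = {"list_files", "read_file", "search_code"}
MUTATING = {"write_file", "push_commit", "delete_repo"}


def may_run(tool_name: str, ask_human: Callable[[str], bool]) -> bool:
    """Auto-approve read-only tools; require a human for mutating ones."""
    if tool_name in READ_ONLY:
        return True
    if tool_name in MUTATING:
        return ask_human(tool_name)
    return False  # unknown tools are denied by default


def deny_all(tool_name: str) -> bool:
    """Stand-in reviewer that rejects every request."""
    return False


print(may_run("read_file", deny_all))
print(may_run("push_commit", deny_all))
```

Denying unknown tools by default means a newly added server capability stays inert until someone classifies it, which is the safer failure mode.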

For teams working within the Python ecosystem, the official MCP Python SDK provides a robust foundation for those implementations.

It explicitly supports building servers that expose resources, prompts, and tools, and offers native support for several transports, including STDIO, SSE, and Streamable HTTP. When authoring these servers, focus on these core capabilities while staying alert to technical pitfalls such as stray output on stdout breaking a STDIO connection.

While the official MCP servers repository and registry offer a wealth of reference implementations for various tools and services, they should be approached with professional caution.

Those examples are excellent for understanding how to structure an integration, but they should be treated primarily as educational resources. Before moving them into a production environment, you should independently harden the code to meet your specific security and performance requirements.

Developer Sidebar

The decision on which MCP-based systems need to be read-only is an important one.

When I was working on a vector database system that held context on a client’s code bases, one major concern was flagged: what would happen if the AI, while analyzing a code base, pushed changes to the repository in the cloud? Those changes could go live without anyone on the team knowing until it was too late.

To address those concerns, we decided that the system should only be able to download, read, and make suggestions on the code repo. It could give code suggestions, but should not actually make changes to the code.

With that in mind, we quickly envisioned what could go wrong if we just integrated the LLM with the Bitbucket MCP server, which was the initial approach we had in mind. Imagine that, in the middle of the night, the agent completes an overnight analysis of a code base, makes changes, and pushes them to production, only for the team to find out the next morning that the changes went live and the entire website broke. Or even worse, imagine the AI agent decides to start from scratch and deletes the entire repo in the process.

Of course, those are doomsday scenarios. Professional environments have code review systems and pipelines in place. With the rise of AI, however, these doomsday scenarios now have a higher probability of happening.

Long story short, we went with the approach mentioned earlier: determining what functionality the client wants, hardcoding Python functions that do the job, and then setting these functions as tools for the AI agent.

Integrating MCP Servers Into AI/Agent Frameworks

The flexibility of the Model Context Protocol allows it to integrate seamlessly with leading AI frameworks and specialized model-serving infrastructure.

By using MCP as a standardized interface, developers can bridge the gap between high-level orchestration libraries and low-level machine learning workflows.


Industry Integration Approaches

Two primary ecosystems have emerged as the dominant ways to implement MCP at scale. In the OpenAI ecosystem, remote MCP servers are treated as “tools” within the Responses API.

This setup is particularly powerful because it allows for granular control; tool calls can be configured to require manual human approval, ensuring a safety check before the model executes a command.
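As a rough sketch of what that configuration can look like, the helper below builds a tool entry for a remote MCP server. The field names mirror the Responses API documentation as I understand it and should be checked against the current reference; the `remote_mcp_tool` helper, server label, URL, and tool name are hypothetical.

```python
from typing import Optional


def remote_mcp_tool(label: str, url: str,
                    allowed_tools: Optional[list] = None,
                    require_approval: str = "always") -> dict:
    """Build a tool entry describing a remote MCP server.

    Field names are an assumption based on the Responses API docs;
    verify them against the current reference before relying on this.
    """
    tool = {
        "type": "mcp",
        "server_label": label,
        "server_url": url,
        "require_approval": require_approval,  # human sign-off per call
    }
    if allowed_tools is not None:
        tool["allowed_tools"] = list(allowed_tools)  # expose a subset only
    return tool


# Hypothetical server label, URL, and tool name.
tools = [remote_mcp_tool("analytics", "https://mcp.example.com/mcp",
                         allowed_tools=["run_report"])]
print(tools)
```

Defaulting `require_approval` to `"always"` and listing allowed tools explicitly keeps the safe behavior as the default, so loosening it has to be a deliberate choice.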

Alternatively, LangChain users can leverage the protocol through a dedicated MCP adapter library. This allows LangChain agents to interact with tools defined on any MCP server across multiple transports. This approach is ideal for developers who already have complex agentic workflows and want to add standardized data access without rewriting their existing logic.

Frequently Asked Questions

How does MCP differ from a standard API integration?

Standard API integrations are usually “bespoke,” meaning you have to write specific code to tell an AI how to use a specific endpoint (like a custom wrapper for every tool). MCP acts as a universal translator. Once a data source or service is built as an MCP server, it can “plug and play” into any AI host (like Claude Desktop or a custom agent) without rewriting the integration logic. It’s the difference between hardwiring a device and using a USB-C port.

Can I use MCP servers with local LLMs to ensure data privacy?

Yes, but with caveats. While MCP is model-agnostic, the LLM you choose must support tool use (function calling). As noted in the case study earlier in this article, smaller local models (under 7B parameters) often struggle with the complex context required to execute MCP tools accurately. For a balance of privacy and performance, we recommend models like Qwen2.5-14B or Llama 3.1-8B hosted via Ollama, provided your infrastructure can handle the latency.

What are the primary security risks of deploying MCP in a corporate network?

The main risk is the “Confused Deputy” problem, where an AI agent is tricked into using its elevated permissions to perform an unauthorized action (e.g., deleting a database instead of just querying it). Because MCP servers can have broad “tool” access, you must implement Human-in-the-Loop (HITL) approvals for any “write” operations and use OS-level sandboxing to ensure the server cannot access files outside its intended scope.

Does adding more MCP servers slow down the AI’s response time?

Yes. Every MCP server you connect adds “weight” to the model’s context window. The AI has to “read” the definitions and capabilities of every available tool before it can decide which one to use. This increases token consumption and latency. To mitigate this, professional deployments should use “on-demand” tool loading or, in resource-constrained environments, replace heavy MCP servers with streamlined, hardcoded Python functions for specific tasks.

Is MCP a replacement for frameworks like LangChain or CrewAI?

No, it is a complement. Frameworks like LangChain and CrewAI handle the orchestration (the “brain” and the “teamwork”), while MCP handles the connection (the “hands” and the “access”). You can use an MCP adapter within a LangChain agent, allowing your complex autonomous workflows to interact with any standardized MCP data source seamlessly.