In highly regulated and technical industries, generic AI models often fall short of business needs.

A growing solution is custom LLM development for specialized industries – in other words, building Domain-Specific Language Models (DSLMs) tailored to your field. Unlike one-size-fits-all tools (like ChatGPT or other general LLMs), DSLMs are trained on industry-specific data and terminology, delivering expert-level accuracy and compliance.

This article explores why specialized intelligence is crucial for sectors like law, finance, and healthcare, and how DSLMs provide the precision, reliability, and governance these fields demand.

Modern enterprises in law, finance, or medicine face high stakes where a single AI mistake can mean regulatory penalties or patient harm. Generic AI might sound fluent, but fluency isn’t enough when accuracy, compliance, and context are non-negotiable. That’s where DSLMs come in. By focusing on a narrow domain, a DSLM understands your industry’s jargon and rules deeply. The result is an AI assistant that doesn’t just chat – it performs like a specialist, with far fewer errors and much greater trustworthiness for critical tasks. Let’s delve into how DSLMs overcome the limitations of generic models and why your business may need one.

The Limitations of Generic LLMs: Addressing Hallucinations, Lack of Industry Terminology, and Regulatory Risks

General-purpose LLMs like GPT-4 or Claude are impressive in open-ended tasks, but they have serious limitations in specialized domains. When accuracy and accountability are paramount, these broad models can become liabilities. Below are some of the main issues.

Hallucinations

Large LLMs are prone to generating information that sounds plausible but is incorrect or irrelevant.

In everyday use this might be a minor nuisance, but in regulated industries it’s unacceptable. A 2023 study found advanced models like GPT-3 and GPT-4 “hallucinate” 12–20% of the time on complex financial tasks.

Imagine a banking chatbot inventing a false compliance rule, or a medical assistant misidentifying a symptom – the consequences could be severe. In fact, hallucinated outputs in finance have led to incorrect risk reports, potentially causing regulatory violations or financial losses.

Regulated sectors can’t afford that level of uncertainty. Many firms are now abandoning fully general models for more reliable, domain-scoped alternatives.

Lack of Industry Knowledge & Terminology

Generic models learn from the broad internet and may not grasp the nuances of niche terminology or processes in your field.

They might misunderstand a legal term of art or a medical abbreviation, leading to inaccuracies. General LLMs aren’t trained deeply enough on specialized, validated sources, so they can misinterpret regulations or output outdated/wrong advice in domains like medicine, finance, or law.

By contrast, DSLMs are steeped in the lingo and lore of their target domain. For example, a legal DSLM will know the difference between an indemnity and a limitation of liability, or the specific meaning of “consideration” in a contract.

General models often struggle with such subtleties, which can lead to errors or misinterpretations when applied to specialized tasks. In short, a generic AI might sound confident discussing your industry, but sounding right is not the same as being right. High-stakes industries reward precision, not creative guesswork, and generic AI is not built for that level of exactitude.

Regulatory and Compliance Risks

Out-of-the-box LLMs were not designed with specific regulations in mind. They learn from uncurated public data, which means they have no inherent understanding of laws or compliance standards for healthcare, finance, legal, etc. This poses a huge risk in regulated sectors.

Without careful tuning, a generic AI might inadvertently reveal personal health information (violating HIPAA), ignore financial reporting rules, or omit required legal disclaimers. These models lack built-in compliance frameworks, making it difficult for enterprises to ensure AI outputs meet industry rules.

Even adding external filters or guardrails is often insufficient – the model can still produce unpredictable, non-compliant answers.

For example, if asked about a patient case, a vanilla LLM might casually include identifying details, breaching privacy mandates. Or it might give investment advice that conflicts with fiduciary regulations.

Such compliance failures are a top reason AI projects fail in regulated industries. The unpredictability of a general model is simply too high without domain-specific constraints. In contrast, as we’ll see, DSLMs bake in the necessary industry rules and guardrails from the start.

Data Security Concerns

General AI services are typically cloud-based, meaning your prompts and data leave your controlled environment. For sensitive data – client contracts, financial transactions, patient records – sending it to a third-party API is often a non-starter for security and data sovereignty.

Many organizations are (rightly) uncomfortable uploading confidential documents to an external server just to get an AI response. Beyond privacy, there’s also the risk that your data could inadvertently be used to further train the public model, unless explicit guarantees are in place. Generic LLM providers may retain query data (even if anonymized), which raises compliance red flags in finance and healthcare.

In short, third-party hosted LLMs can’t guarantee that what happens in your company stays in your company. This is a fundamental limitation when trust and confidentiality are mandatory.

The above issues explain why general AI falls short for specialized, high-stakes use cases. When the cost of an error is measured in lawsuits or lives, businesses can’t rely on a bot that might be right. They need an AI that is consistently accurate and accountable within a strict domain context. This is precisely the gap that Domain-Specific Language Models are designed to fill.

DSLM Architecture and Training: How Fine-Tuning on Industry Data Creates Superior Relevance and Accuracy

How do we get an AI that behaves like a seasoned professional rather than a precocious generalist?

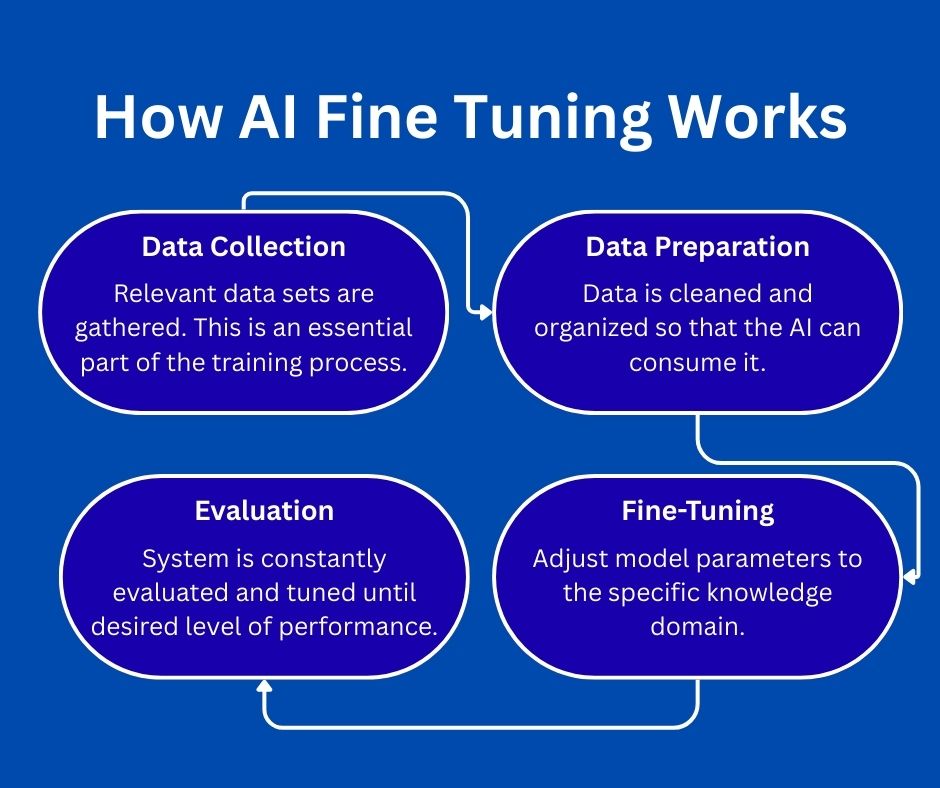

The answer lies in how DSLMs are built and trained. A DSLM typically starts from a powerful general model “brain,” but then is fine-tuned on curated, domain-specific datasets relevant to your industry.

By honing the model on what matters to you (and filtering out what doesn’t), we end up with an expert system that provides superior relevance and accuracy for your use cases.

Fine-Tuning with Domain Data

Fine-tuning means taking a pre-trained base (like GPT) and continuing its training on a targeted dataset.

For a DSLM, that dataset might be financial transaction records and AML guidelines (finance), case law and contracts (legal), or medical textbooks and trial results (healthcare). The model gradually adjusts its billions of parameters to better predict text in the context of that niche content.

Essentially, the AI “studies” the domain literature and learns the language, facts, and logic of the field. It’s the difference between a well-read layperson and a board-certified specialist – both speak English, but the specialist has a depth of knowledge and vocabulary the layperson lacks.

Crucially, the data used for fine-tuning is high-quality and vetted. This dramatically improves factual accuracy. A DSLM isn’t just guessing based on internet trivia; it’s relying on vetted domain knowledge, which greatly reduces hallucinations and incorrect answers.

The model learns to rely on the same authoritative sources your experts trust. For example, a medical DSLM would learn from actual clinical guidelines and journals, so its suggestions align with current medical consensus – reducing the chance of an unsafe recommendation. In a sense, fine-tuning grounds the model in reality: it won’t fabricate as readily because it “knows” the boundaries of truth in that domain.

Domain-Specific Vocabulary and Context

An immediate benefit of DSLM training is mastery of jargon and context. Every industry has unique acronyms, terms of art, and styles of communication.

General models often stumble here, but a DSLM learns the lingo.

For instance, a generic model might not reliably distinguish a “security” (financial instrument) from “security” (safety) based on context, or it might not recognize a term like “KYC” (Know Your Customer) which is obvious to any banker.

After domain training, the model understands those nuances. By being rich in industry-specific terminology and knowledge, DSLMs can interpret queries and documents the way a domain expert would. This higher contextual awareness directly translates to fewer errors and more relevant answers in practice.

Architectural Right-Sizing (Efficiency and Speed)

Interestingly, DSLMs often don’t need to be ginormous. By focusing on a narrower scope, they can achieve great results with fewer parameters than a general LLM. This makes them smaller, faster, and cheaper to run.

Why pay for a 175-billion-parameter behemoth when a 6-billion-parameter model fine-tuned on your tasks might be just as accurate for your needs? Many DSLMs leverage parameter-efficient adaptation techniques (such as LoRA, short for low-rank adaptation) to avoid retraining the whole model. The upshot is cheaper training, and the smaller model size means lower computational cost and latency for inference.
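To make the savings from low-rank adaptation concrete, here is a minimal arithmetic sketch. It assumes a single weight matrix of a hypothetical size and a hypothetical rank; real models have many such matrices, and the numbers below are illustrative only.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable weights when the whole d_out x d_in matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights for a low-rank update B @ A,
    where B is d_out x rank and A is rank x d_in."""
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, chosen only for illustration.
d_out, d_in, rank = 4096, 4096, 8

full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)

print(f"full fine-tuning: {full:,} trainable weights")
print(f"LoRA (rank {rank}): {lora:,} trainable weights")
print(f"fraction trained: {lora / full:.4%}")
```

Under these assumptions the low-rank update trains well under 1% of the weights in that layer, which is where the lower adaptation cost comes from.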

In fields like finance where milliseconds matter, or in healthcare where every minute saved in diagnosis counts, this efficiency is critical.

Built-In Domain Knowledge and Rules

Perhaps the biggest advantage is that DSLMs can have compliance and domain rules built into their behavior through training, rather than bolted on afterwards. During training, the model doesn’t just learn facts: it learns patterns of reasoning and constraints specific to the domain.

For example, a financial DSLM can be trained on proper regulatory filings and audit checklists, so it internalizes the need to, say, flag transactions over a certain size or follow SEC disclosure rules.

A healthcare DSLM can be imbued with HIPAA-aware behavior and medical ethics, reducing the chance it outputs something that violates patient privacy or safety guidelines. In effect, the model learns the “logic of the domain” in addition to language. This is very hard to retrofit into a generic model via prompts alone. It’s much more effective to build those guardrails in during training.

To illustrate, consider some real-world DSLM examples. Researchers have developed specialized models like BioBERT (biomedical text), FinBERT (financial reports), and LegalBERT (legal documents) by training on domain corpora. These models were reported to outperform general-purpose models of similar size on tasks like biomedical named entity recognition, financial sentiment analysis, and legal text classification, respectively, thanks to their focused training.

Even tech giants are on board: Google’s Med-PaLM 2 model (fine-tuned for medicine) achieved about 86.5% accuracy on USMLE-style medical exam questions, surpassing the original Med-PaLM by more than 19 percentage points. In head-to-head comparisons, physicians even preferred Med-PaLM 2’s answers over physician-written answers in many cases. Such gains underscore that fine-tuning on expert data doesn’t just incrementally improve performance; it can push AI into expert-level territory. When done right, a DSLM can approach or even exceed human specialist accuracy on narrowly defined tasks.

In summary, DSLMs owe their superior accuracy to a simple formula: start with a general intelligence, then raise it in a domain like you would an apprentice. The fine-tuning process equips the model with deep domain understanding, relevant facts, and compliance constraints. The architecture is optimized to that purpose – often leaner and more efficient, yet smarter where it counts. The end product is an AI that speaks your industry’s language and thinks in its terms. Next, let’s see how these qualities translate into tangible benefits via real use cases in high-stakes verticals.

Real-World Example

In one solution we developed, we built digital twins of people based on their CVs and other information about them. Each twin could answer career-related and other questions online on behalf of the person it represented.

The system had guardrails in place so that the AI never invented facts. For example, the twin couldn’t say that a person could do something or had certain work experience if it was not explicitly stated in the CV or other information.

While that is not a fully specialized intelligence system, it followed the same principle of relying on certain information as a knowledge domain and not hallucinating.
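The grounding principle can be sketched in a few lines. This is a toy illustration, not the system described above: the `Profile` class, the `answer` function, and the sample facts are all hypothetical, and a real twin would use a language model over the source documents rather than a dictionary lookup.

```python
from dataclasses import dataclass, field

REFUSAL = "That is not stated in the information I have."


@dataclass
class Profile:
    """Facts explicitly stated in a person's CV (hypothetical structure)."""
    name: str
    facts: dict[str, str] = field(default_factory=dict)


def answer(profile: Profile, topic: str) -> str:
    """Return a stated fact, or refuse rather than invent one."""
    key = topic.strip().lower()
    if key in profile.facts:
        return profile.facts[key]
    return REFUSAL


twin = Profile(
    name="Example Person",
    facts={
        "current role": "Data analyst at a logistics company",
        "languages": "English, Spanish",
    },
)

print(answer(twin, "Current role"))           # stated in the profile
print(answer(twin, "management experience"))  # not stated, so refused
```

The design choice worth noting is the explicit refusal path: anything outside the supplied facts produces a fixed response instead of a guess.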

Use Cases in High-Stakes Verticals

How exactly are organizations applying DSLMs in the wild?

Let’s explore a few high-stakes verticals – legal, finance, and pharmaceuticals/healthcare – where domain-specific AI is making a difference. In each of these areas, the cost of error is high, and the value of tailored AI is correspondingly great.

From automating contract reviews to catching money launderers or improving diagnostic accuracy, DSLMs are unlocking new efficiencies and insights.

Legal: Automated Transaction Review and Indemnification Provision Analysis

Legal work is drowning in documents. Consider a corporate merger or a large commercial contract review: attorneys must sift through hundreds of pages, pinpoint key clauses (indemnities, liabilities, etc.), and ensure nothing is missed – all under tight deadlines. This is exactly where a legal DSLM shines.

AI-powered transaction review systems, driven by specialized legal models, can analyze mountains of contracts to extract crucial information and flag risks in a fraction of the time it takes humans.

For instance, modern legal AI can understand legal language contextually and identify key provisions automatically. A domain-tuned model will comb through an agreement and pull out clauses on indemnification, limitation of liability, change of control, termination rights, and more – the very clauses lawyers pore over during due diligence.

Instead of manually hunting for every indemnity clause, the AI highlights each one for the lawyer to examine, and even notes if any are non-standard or particularly risky. This dramatically reduces the chance of overlooking a single critical clause that could cost millions later. It also frees up attorneys’ time to focus on analyzing implications rather than locating text.

Take indemnification provisions as a concrete example. These clauses transfer risk between parties. They’re often buried deep in contracts. A legal DSLM-based tool can not only find all instances of indemnification clauses but also compare them against templates or benchmarks. It might flag if an indemnity is unusually broad or if it’s one-sided in a way that’s unfavorable to your client.

Essentially, the AI does a first-pass issue spotting with consistent accuracy, something human reviewers, no matter how careful, might slip up on when fatigued by volumes of text.
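As a rough picture of what first-pass issue spotting produces, the sketch below uses a simple keyword heuristic as a stand-in for a legal DSLM. The regular expression, the list of “broad” markers, and the sample contract are assumptions made for illustration; a trained model would rely on context rather than fixed phrases.

```python
import re

# Matches indemnify, indemnifies, indemnification, indemnity, and similar forms.
INDEMNITY = re.compile(r"\bindemni[ft]\w*", re.IGNORECASE)

# Hypothetical phrases that often signal an unusually broad indemnity.
BROAD_MARKERS = ("any and all", "regardless of", "without limitation")


def review(contract_text: str) -> list[dict]:
    """Return one finding per clause that mentions indemnification."""
    findings = []
    clauses = [c.strip() for c in contract_text.split("\n\n") if c.strip()]
    for number, clause in enumerate(clauses, start=1):
        if not INDEMNITY.search(clause):
            continue
        lowered = clause.lower()
        markers = [m for m in BROAD_MARKERS if m in lowered]
        findings.append({"clause": number, "broad_markers": markers})
    return findings


sample = (
    "1. The Supplier shall deliver the goods within 30 days.\n\n"
    "2. The Supplier shall indemnify the Buyer against any and all claims, "
    "regardless of cause.\n\n"
    "3. Each party shall indemnify the other for third-party claims arising "
    "from its own negligence."
)

for finding in review(sample):
    print(finding)
```

Clauses with a non-empty `broad_markers` list would be the ones surfaced first for a lawyer to examine.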

Moreover, because the models can integrate compliance frameworks, a legal DSLM can be aware of jurisdiction-specific rules. For example, if reviewing a contract involving personal data, the AI could automatically check for GDPR-compliant data protection clauses. If it’s analyzing an employment agreement, it might verify that non-compete clauses comply with local labor laws. A general LLM wouldn’t know to do this without extensive prompts, but a DSLM can be pre-trained on such regulatory requirements.

The result is a powerful assistive tool: faster contract reviews, fewer missed risks, and more standardized analysis. Senior partners get peace of mind that the AI second pair of eyes hasn’t overlooked something a tired junior might. And instead of spending 80% of their time on rote review, lawyers can spend more time on high-level interpretation, negotiation strategy, and advising clients. In an industry where time is literally money (think billable hours) and risk management is king, DSLMs are poised to become indispensable.

Finance: Real-Time AML and Fraud Detection

Money moves fast, and so do bad actors. Financial institutions are under intense pressure to detect fraud and money laundering as it happens, not weeks later. Traditional rule-based systems flag some suspicious transactions, but they throw off many false positives and also miss sophisticated schemes. Enter DSLMs in finance: these models are trained on the language of transactions, regulations, and financial crime patterns. They can serve as intelligent sentinels, monitoring streams of data and flagging truly anomalous or high-risk activity in real time.

A financial DSLM can ingest transaction descriptions, customer profiles, historical patterns, and even unstructured data like news or emails. Because it understands finance-specific language, it can catch things a generic model might miss: for example, recognizing that a series of transfers fits a known layering pattern in money laundering, or that a customer’s profile info doesn’t match their transaction behavior (a red flag). Anti-Money Laundering (AML) efforts benefit especially from AI that knows the difference between a benign large transfer and a structuring attempt to evade reporting thresholds.
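To show what “structuring” looks like in data, here is a minimal sketch of a conventional rule that a model-based system would aim to improve on. The threshold, the time window, and the sample deposits are assumptions for illustration, not regulatory guidance.

```python
from collections import defaultdict
from datetime import date, timedelta

REPORTING_THRESHOLD = 10_000   # assumed reporting threshold
WINDOW = timedelta(days=3)     # assumed look-back window


def possible_structuring(deposits):
    """Flag customers whose sub-threshold deposits add up to the
    threshold or more within the window.

    deposits: iterable of (customer_id, date, amount) tuples.
    """
    by_customer = defaultdict(list)
    for customer, day, amount in deposits:
        if amount < REPORTING_THRESHOLD:  # large deposits are reported anyway
            by_customer[customer].append((day, amount))

    flagged = set()
    for customer, items in by_customer.items():
        items.sort()
        start, running = 0, 0
        for day, amount in items:
            running += amount
            # Drop deposits that have fallen out of the window.
            while day - items[start][0] > WINDOW:
                running -= items[start][1]
                start += 1
            if running >= REPORTING_THRESHOLD:
                flagged.add(customer)
                break
    return flagged


deposits = [
    ("A", date(2024, 1, 1), 4000),
    ("A", date(2024, 1, 2), 3500),
    ("A", date(2024, 1, 3), 3000),   # three small deposits in three days
    ("B", date(2024, 1, 1), 12000),  # one large deposit, not structuring
    ("C", date(2024, 1, 1), 4000),
    ("C", date(2024, 1, 20), 7000),  # too far apart to count together
]

print(possible_structuring(deposits))
```

A fixed rule like this is easy to evade by stretching the timing or splitting across accounts, which is the gap a model trained on financial crime patterns is meant to close.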

LLM technology can significantly enhance compliance workflows. By automating routine checks like AML alerts and fraud screening, banks can improve both efficiency and effectiveness of their oversight.

In practice, a domain-specific model might cross-verify each flagged transaction against contextual data (geolocation, customer history, sanctions lists, etc.) and provide a risk score with an explanation.

Real-time fraud detection is another area where DSLMs offer a leap forward. Advanced systems now combine large language models with real-time data feeds to monitor activities like credit card transactions or account logins as they occur. Because these models can understand patterns and context, they can spot complex fraud schemes that involve, say, coordinated actions across multiple accounts or subtle deviations from a customer’s typical behavior.

For example, an AI might flag that multiple low-value transactions from different senders are hitting an account right after a big deposit – a potential smurfing technique in money laundering. Or it might detect that a user’s spending pattern this week is highly inconsistent with their usual habits, indicating a possible account takeover fraud. By analyzing a broad range of features simultaneously (something transformers excel at), LLM-based detectors can catch fraud that rule-based systems overlook. And they do it fast – often in milliseconds – so the system can block a transaction before it completes, preventing loss in real time.

Several financial institutions are exploring custom models fine-tuned for financial data and tasks. These models can be trained on years of transaction data, AML case reports, and regulatory guidance. The payoff is an AI that not only flags suspicious activity but also generates a rationale like a human analyst: “Flagging due to large round-number transfers to multiple new recipients, pattern consistent with layering stage of money laundering.”

That level of insight, produced instantly, helps compliance officers prioritize the truly risky cases among thousands of daily alerts.
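The idea of a risk score that comes with its reasons can be sketched with transparent rules. Everything here is hypothetical: the `Alert` fields, the point weights, and the cut-offs are placeholders, and a DSLM would generate the rationale from context rather than from fixed checks.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    """A flagged transaction with a few contextual signals (hypothetical)."""
    amount: float
    new_recipients: int
    recipient_on_sanctions_list: bool


def score(alert: Alert) -> tuple[int, list[str]]:
    """Return a 0-100 risk score together with the reasons behind it."""
    points, reasons = 0, []
    if alert.recipient_on_sanctions_list:
        points += 60
        reasons.append("recipient appears on a sanctions list")
    if alert.amount >= 1_000 and alert.amount % 1_000 == 0:
        points += 20
        reasons.append("large round-number transfer")
    if alert.new_recipients >= 3:
        points += 20
        reasons.append(f"{alert.new_recipients} new recipients")
    return min(points, 100), reasons


risk, why = score(Alert(amount=5000, new_recipients=4,
                        recipient_on_sanctions_list=False))
print(risk, "-", "; ".join(why))
```

Returning the reasons alongside the number is what lets a compliance officer triage alerts without re-deriving why each one fired.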

Beyond AML, a finance DSLM can assist in areas like algorithmic trading compliance, real-time market news analysis, or customer service (with accurate, regulation-compliant answers). But the core theme is precision and speed. Money-launderers and fraudsters innovate constantly; a static system won’t keep up.

A DSLM, however, can be updated with new typologies and adapt, all while respecting the strict privacy and audit requirements of finance. For CFOs and compliance officers, this means fewer fines, less fraud loss, and a more secure financial ecosystem, which ultimately builds customer trust. It’s not an exaggeration to say that for banks and fintechs, adopting domain-specific AI for AML could be the difference between catching the next scandal in time and being the next headline.

Pharma & Healthcare: Accuracy in Medical Diagnostics and Technical Documentation

In pharmaceutical and healthcare settings, accuracy isn’t just important – it’s literally life-saving. Whether it’s diagnosing a condition or ensuring a manufacturing protocol is followed to the letter, mistakes can cost lives and trigger regulatory nightmares. DSLMs are proving immensely valuable in these environments by boosting the accuracy of medical AI and the reliability of technical documentation processes.

Consider medical diagnostics and decision support. Today’s top medical LLMs (like Google’s Med-PaLM 2) have shown they can reach near-expert performance on medical exam questions. A domain-tuned model can parse a patient’s symptoms and medical history and suggest likely diagnoses or next steps, often matching the insights of experienced physicians.

In fact, the original Med-PaLM was the first AI system reported to exceed the passing score on U.S. Medical Licensing Exam-style questions, Med-PaLM 2 improved substantially on that score, and physicians in studies preferred its answers on many clinical metrics. This is huge: it means a properly trained medical DSLM can serve as a dependable “second opinion” or triage assistant. For example, if a patient presents a rare combination of symptoms, a general AI might get confused or hallucinate a diagnosis. A medical DSLM, however, has likely been trained on many similar cases and knows the pertinent details. It can recall, say, that Disease X is rare but often misdiagnosed as Disease Y, and recommend specific tests to distinguish them.

Now, accuracy in healthcare isn’t only about diagnostics – it’s also about clear, error-free medical documentation. The pharmaceutical industry in particular is documentation-heavy: Standard Operating Procedures (SOPs), batch manufacturing records, quality control reports, regulatory filings, the list goes on. These documents are lengthy and technical, and a minor error (like a wrong unit conversion or an omitted step) can halt production or spark regulatory action. Generic AIs don’t handle this well because they don’t understand a pharma company’s proprietary documentation and the specific protocols behind the firewall.

A DSLM trained on a pharma company’s own data, however, has learned the company’s validated processes and terminology, which a public model never saw. This makes it incredibly useful for tasks like document QC, summarization, and compliance checks. For instance, an NLP system built on a pharma DSLM can ingest a 200-page batch record and highlight any deviations or anomalies (maybe a temperature reading out of range, or a missing sign-off). It can check that a drug label document matches the approved template exactly, down to every last contraindication and dosage instruction.
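Once a batch record has been turned into structured steps, the deviation checks themselves are simple to express. The sketch below assumes that extraction has already happened; the `Step` fields and the allowed temperature range are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    """One step of a batch record after extraction (hypothetical fields)."""
    name: str
    temperature_c: float
    signed_off_by: Optional[str]


TEMP_RANGE_C = (2.0, 8.0)  # assumed allowed range, for illustration only


def deviations(steps):
    """List out-of-range temperatures and missing sign-offs."""
    low, high = TEMP_RANGE_C
    found = []
    for step in steps:
        if not low <= step.temperature_c <= high:
            found.append(
                f"{step.name}: temperature {step.temperature_c} C "
                f"outside {low}-{high} C"
            )
        if not step.signed_off_by:
            found.append(f"{step.name}: missing sign-off")
    return found


record = [
    Step("Mixing", 5.0, "QA-1"),
    Step("Holding", 9.5, "QA-2"),
    Step("Filling", 4.0, None),
]

for issue in deviations(record):
    print(issue)
```

The harder part in practice is the extraction step that precedes this check, which is where a model familiar with the company's document formats would do its work.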

The margin for error in these tasks is effectively zero. In pharma, “close enough” based on generic knowledge isn’t acceptable: the AI system must understand the specific processes that regulators audit. We already see prototypes of this: one FDA-related project created a localized model for drug label documents which achieved 95% accuracy in drug name recognition (a notoriously tricky aspect) by working within a secure, domain-specific framework.

Another example is using a DSLM to cross-check clinical trial data for inconsistencies or to ensure patient summaries correctly reflect the source data. By having the AI double-check tedious details, human researchers and clinicians can focus more on interpretation and decision-making.

Furthermore, a healthcare DSLM can be built to respect patient privacy and ethical considerations, provided it is trained and deployed properly. It can be designed never to divulge personal identifiers and to follow guidelines for clinical decision support tools. Generic models can’t guarantee that (they might, for example, generate an answer that includes someone’s name from training data by accident).

A DSLM for healthcare, however, can be trained exclusively on de-identified data or within a hospital’s secure environment, ensuring compliance with privacy laws like HIPAA. It’s not just accuracy of content, but also accuracy in process – making sure the right data is used and protected in the right way.

From a patient’s perspective, these advancements mean more accurate diagnoses, fewer unnecessary tests, and more personalized care. For pharma companies, it means faster time-to-market for drugs (since documentation and compliance checks speed up), and fewer costly mistakes in production. And for healthcare executives and hospital admins, it means AI solutions that can actually be trusted in practice because they’ve been purpose-built for the medical context.

Data Sovereignty and Compliance: Protecting Sensitive Data through Private Cloud Deployments and Encryption

For many organizations, a major barrier to AI adoption isn’t capability – it’s trust and control over data. Banks, law firms, and hospitals deal with extremely sensitive information, and they simply cannot let that data roam free on unknown servers. This is why data sovereignty and compliance considerations loom large, and why DSLMs (often deployed in private or hybrid environments) offer a compelling solution.

Keeping Data in Safe Hands (and Places)

With a domain-specific model, you typically have the option to deploy it in a private cloud, on-premises data center, or a secure virtual private cloud (VPC) with your cloud provider. Unlike public AI APIs, a privately deployed DSLM ensures that your data never leaves your controlled environment. All processing – from training to inference – can happen on infrastructure that you manage or explicitly trust. The benefit is twofold: you eliminate the risk of third-party data exposure, and you can often satisfy data residency requirements (e.g., ensuring EU data stays within EU data centers for GDPR compliance).

Major cloud platforms recognize this need; services like Azure OpenAI Service and Amazon Bedrock let you use powerful models in a region you choose, over private network connections. By keeping the AI “close” to your secure databases, you retain data sovereignty: sensitive content remains under your governance at all times.

And for organizations seeking even tighter control, open-source models offer an additional layer of flexibility. Many smaller models can be optimized to run directly on local machines, including high-performance laptops, edge devices, and on-prem servers. This local-first approach keeps all inference inside your physical environment, eliminating cloud dependencies entirely. It is particularly valuable for industries with strict confidentiality requirements, air-gapped networks, or environments where the cloud is simply not an option.

Beyond local execution, open-source models also support deeper security customization. Because the underlying architecture and weights are accessible, security teams can harden the system with tailored safeguards, such as custom logging, permission layers, encryption standards, red-team testing, or sandboxing mechanisms. This level of transparency is not available with closed, black-box APIs, and it gives enterprises more confidence that the model behaves as intended.

In short, whether deployed in a VPC, on-prem, or even directly on a workstation, domain-specific and open-source models allow businesses to achieve maximum control, privacy, and compliance—ensuring that AI operates entirely within the boundaries of their security and governance frameworks.

Compliance by Design

Private deployment of DSLMs dovetails with compliance in several ways. First, you can enforce encryption and access controls rigorously. Best practices include encrypting data at rest and in transit, isolating the model on secure subnets, and using strong authentication for any access. This means even if someone intercepted the data flow, they’d get gibberish, and only authorized apps or users can query the model.

Second, running the model in-house makes it easier to maintain audit logs and traceability for all AI interactions – a must in regulated sectors. You can log every query and response, who made it, and what data was accessed, creating an audit trail for regulators or internal review. This level of logging is often not available (or not as transparent) with third-party APIs.
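A minimal sketch of such an audit trail follows. The `audited` wrapper and the stand-in `fake_model` function are hypothetical; a real deployment would write to tamper-resistant, access-controlled storage rather than an in-memory list, since the log itself contains sensitive prompts.

```python
import json
from datetime import datetime, timezone


def audited(model_fn, log):
    """Wrap a model call so every query and response is recorded."""
    def wrapper(user_id, prompt):
        response = model_fn(prompt)
        log.append(json.dumps({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user_id,
            "prompt": prompt,
            "response": response,
        }))
        return response
    return wrapper


def fake_model(prompt):
    """Stand-in for a privately hosted model (assumption for the demo)."""
    return f"(model output for: {prompt})"


audit_log = []
ask = audited(fake_model, audit_log)
ask("analyst-7", "Summarize alert 123")

print(audit_log[0])
```

Because the wrapper sits in front of the model, no caller can reach it without leaving a record of who asked what and what came back.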

Additionally, a DSLM can be trained only on data you provide – and you can scrub that data of any personal identifiers not needed for the AI task. That means the model itself doesn’t “know” any PII that you didn’t explicitly allow. For example, a bank could train a DSLM on transaction descriptions and AML cases but exclude actual account numbers or names from training data. The model will learn patterns of fraud without ever seeing a customer’s full identity. This controlled training process can ensure compliance with data minimization principles. It also prevents the model from memorizing and regurgitating sensitive facts (a flaw seen in some large models that accidentally leaked training data). In effect, you decide exactly what the AI sees and learns, keeping it on a need-to-know diet aligned with privacy regulations.
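Scrubbing identifiers before training can start as simply as the sketch below. The two patterns are assumptions for illustration (an 8 to 12 digit account number and a basic email shape); production de-identification needs far broader coverage and human review.

```python
import re

# Hypothetical patterns; real identifier formats vary by institution.
PATTERNS = {
    "ACCOUNT": re.compile(r"\b\d{8,12}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def scrub(text: str) -> str:
    """Replace matched identifiers with a placeholder label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


record = "Transfer of 9500 from account 123456789 to jane.doe@example.com flagged."
print(scrub(record))
```

The transaction amount survives while the account number and email address do not, so the model can still learn the pattern without seeing who was involved.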

Meeting Industry Standards

Many industries have specific rules to follow, such as FINRA rules in finance, HIPAA in healthcare, or GDPR for personal data of individuals in the EU. A private DSLM can be engineered to comply with these by integrating the relevant rules and being deployed within certified infrastructure.

For example, if you deploy an AI in a HIPAA-compliant cloud environment (with proper Business Associate Agreements in place), and you ensure it doesn’t output disallowed content, you can use AI on patient data while staying within the lines. This is something companies are actively doing: enterprises are moving to hyperscaler-hosted, private LLMs primarily for data privacy and compliance reasons, to avoid shared infrastructure and maintain full control. Essentially, private DSLMs support stringent mandates by design – they keep data where it should be and handle it how it should be handled.

Finally, encryption plays a vital role. With confidential data, you’d encrypt not just the storage but even the memory (via technologies like confidential computing) and all network communication. Some advanced deployments use hardware security modules to protect encryption keys and enclave technologies to run the model such that even cloud admins can’t peek at the data. These safeguards are crucial for sectors like government, finance, or defense.

The good news is that because DSLMs often involve smaller models or more limited scope, they’re easier to fit into these secure setups (whereas a giant LLM might be harder to run in a locked-down enclave due to resource constraints).

In summary, DSLMs allow enterprises to embrace AI on their own terms. You get the benefits of powerful language models without handing the keys of the kingdom to an external service. Data stays private, models behave predictably under your policies, and you can demonstrate compliance every step of the way. For a compliance officer or CISO, this is the difference between sleeping at night and worrying about the next breach or audit finding.

Closing Thoughts

In sectors where precision and trust are paramount, domain-specific AI isn’t just a nice-to-have – it’s rapidly becoming a must-have.

Generic AI models captivated us with their versatility, but businesses have learned that your industry’s challenges demand your industry’s knowledge baked into the solution. Specialized intelligence, in the form of custom-trained DSLMs, delivers higher accuracy, contextual understanding, and compliance readiness that general models simply can’t match.

Legal partners can delegate tedious contract analysis to an AI that never misses a clause. Healthcare executives can deploy clinical assistants that actually align with medical standards and patient privacy.

Compliance officers can finally get AI that adheres to the rulebooks, instead of constantly breaking them. All of this translates to saved time, reduced risk, and ultimately better service outcomes – whether it’s a safer patient diagnosis, a prevented fraud, or a contract executed without a hitch.

As you consider integrating AI into your business, ask yourself: Can I afford AI that “wings it,” or do I need AI that knows? For high-stakes domains, the answer is clear.

Embracing custom LLM development for specialized industries means investing in expert AI that speaks your language – literally and figuratively. It’s about hiring a tireless, ultra-informed team member that never goes off-script. In the competitive and heavily regulated landscape of today, such Specialized Intelligence could be the edge that propels your business forward while keeping you squarely within the lines of accuracy and compliance.

The one-model-fits-all era is fading, and the age of the domain expert AI has begun. Now is the time to evaluate where a DSLM can make a difference in your organization – because the best AI for your business might just be the one you train for yourself.

Build Your Own Domain-Specific AI

If you’re ready to move beyond generic AI and deploy a model that truly understands your industry, we can help.

Our team designs and develops custom, secure Domain-Specific LLMs for law, finance, healthcare, and other high-stakes sectors—built around your data, your compliance needs, and your infrastructure.

Book a FREE strategy call and let’s build the specialized intelligence your business deserves.

Frequently Asked Questions

What is a Domain-Specific Language Model (DSLM)?

A DSLM is a customized AI model trained on industry-specific data, terminology, and rules. Unlike general-purpose models, DSLMs are optimized to understand the exact language, workflows, and compliance requirements of a particular field such as law, finance, healthcare, or pharmaceuticals.

How is a DSLM different from a general LLM like ChatGPT?

General models are trained on broad public internet data and may “sound right” without being accurate. DSLMs are trained on curated domain data, dramatically reducing hallucinations and improving precision. They also incorporate built-in compliance constraints, making them more reliable for high-stakes tasks.

Why do regulated industries need DSLMs?

Sectors like law, finance, and healthcare face serious consequences for incorrect, invented, or non-compliant outputs. A single AI mistake can trigger regulatory fines, data breaches, or patient harm. DSLMs improve accuracy, are built to follow industry rules, and produce outputs that align with compliance frameworks.

Can DSLMs eliminate hallucinations completely?

No AI model can eliminate hallucinations entirely, but DSLMs significantly reduce them by relying on vetted, domain-specific datasets instead of generalized internet knowledge. Guardrails and rule-based logic further minimize risk, making them far safer for regulated environments.

How secure are domain-specific AI models?

DSLMs can be deployed privately (in your VPC, private cloud, or on-prem servers) so that data never leaves your controlled environment. This supports full data sovereignty, encryption, audit logging, and compliance with HIPAA, GDPR, FINRA rules, and other regulatory frameworks.

Can a DSLM run on local machines or on-prem infrastructure?

Yes. Many optimized open-source models are small enough to run on high-end laptops, local servers, or air-gapped systems. This is ideal for organizations that require strict data isolation or cannot use public cloud environments for privacy or regulatory reasons.

What kind of data is needed to train a DSLM?

Training typically uses proprietary, high-quality domain data such as contracts, policies, medical guidelines, transaction patterns, SOPs, or compliance documents. The more structured and vetted the dataset, the more accurate and trustworthy the model becomes.

How long does it take to build a DSLM?

Timelines vary depending on model size, data preparation, evaluation, and deployment requirements. Most business-ready DSLMs can be delivered in 6–12 weeks, though advanced projects may take longer depending on integration needs and security constraints.

Can DSLMs integrate with existing software systems?

Yes. DSLMs can be embedded into internal tools, workflows, and APIs, connecting seamlessly with contract systems, CRM platforms, EMR systems, risk engines, or AML workflows. Their private deployment model makes integration easier and more secure.

What are the most common use cases for DSLMs?

Common applications include:

- Automated legal document review

- AML and fraud detection

- Risk scoring and compliance monitoring

- Medical diagnosis support and triage

- Pharma document QC and regulatory alignment

- Contract summarization and clause extraction

- Internal knowledge assistants trained on proprietary data