Most enterprises face a ‘Knowledge Gap’: their LLMs know everything about the world, but nothing about their customers.

To bridge that gap, leaders must choose between Retrieval-Augmented Generation (RAG) for real-time accuracy and Fine-Tuning for specialized behavior.

This guide breaks down the technical trade-offs and helps you decide which strategy fits your AI roadmap.

What is RAG?

RAG is an architecture that feeds external information into an LLM’s prompts. When the system receives a query, it first retrieves relevant documents or data chunks from a company’s database (often using semantic search on a vector database).

Those retrieved texts are appended to the user’s question, and the LLM then generates an answer using this enriched context.

In practice, a RAG system requires building a data pipeline to index documents and compute embeddings, but it avoids changing the LLM’s own weights.
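
As a rough illustration of the indexing side of that pipeline, the sketch below splits documents into chunks and stores a vector for each chunk. The function names (`chunk_text`, `embed`, `build_index`), the sample documents, and the word-count stand-in for an embedding are all assumptions made for this example; a production system would typically call an embedding model and write to a vector database instead.

```python
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40) -> list[str]:
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def build_index(documents: dict[str, str], max_words: int = 40) -> list[dict]:
    """Create one index entry per chunk: source id, chunk text, and its vector."""
    index = []
    for doc_id, text in documents.items():
        for chunk in chunk_text(text, max_words):
            index.append({"source": doc_id, "text": chunk, "vector": embed(chunk)})
    return index


# Hypothetical company documents, used only for illustration.
docs = {
    "refund-policy": "Customers may request a refund within 30 days of purchase.",
    "shipping-faq": "Standard shipping takes three to five business days.",
}
index = build_index(docs)
print(f"Indexed {len(index)} chunks; model weights untouched.")
```

Note that nothing here touches the LLM itself: updating the index is purely a data operation.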

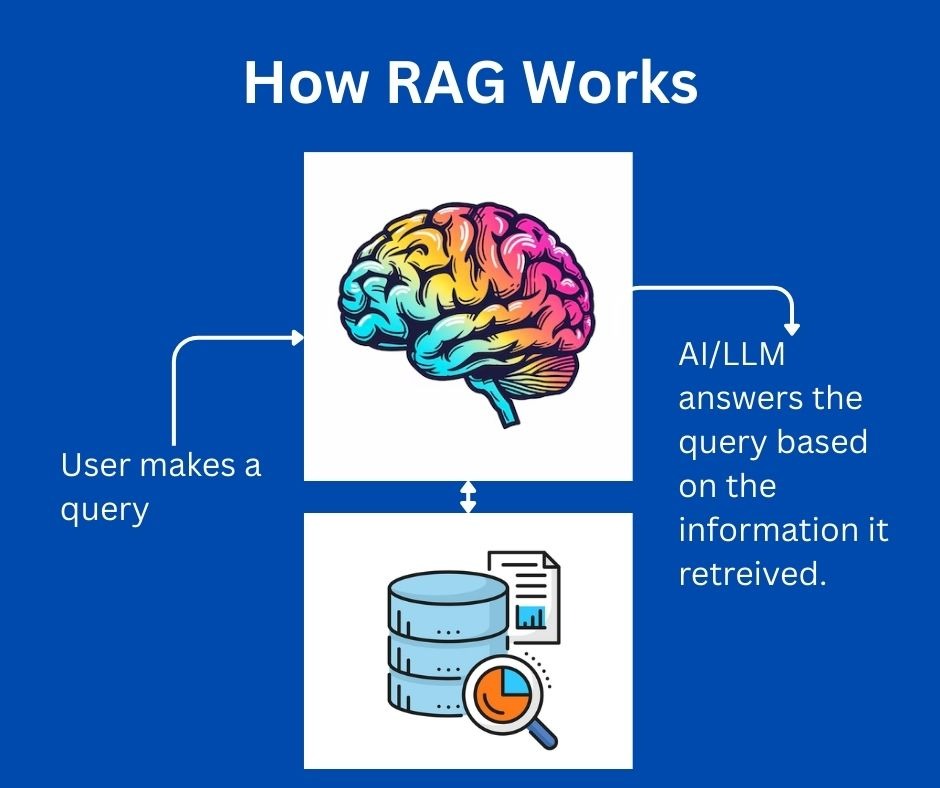

How RAG Works

A RAG query typically follows three steps:

- Search a knowledge base for relevant information (using vector/semantic search).

- Attach the retrieved context to the prompt (augmenting it with up-to-date content).

- Let the LLM generate a response grounded in those sources.

That approach grounds answers in factual data and allows the model to cite or reference specific documents.
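
The three steps above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the knowledge base contents, the word-count similarity, and the `generate` placeholder (which stands in for a real LLM call) are all assumptions.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; a real system would query a vector database.
KNOWLEDGE_BASE = [
    "Refunds are available within 30 days of purchase.",
    "Standard shipping takes three to five business days.",
    "Support is open Monday to Friday.",
]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def search(query: str, k: int = 2) -> list[str]:
    """Step 1: rank documents by similarity to the query and keep the top k."""
    q = embed(query)
    return sorted(KNOWLEDGE_BASE, key=lambda doc: cosine(q, embed(doc)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Step 2: attach the retrieved passages, numbered so they can be cited."""
    sources = "\n".join(f"[{i + 1}] {passage}" for i, passage in enumerate(context))
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"


def generate(prompt: str) -> str:
    """Step 3: placeholder for the LLM call (no model is invoked here)."""
    return f"(LLM response to a {len(prompt)}-character prompt)"


question = "How long does shipping take?"
prompt = build_prompt(question, search(question))
print(prompt)
print(generate(prompt))
```

Numbering the sources in the prompt is one simple way to let the model reference specific documents in its answer.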

Benefits of RAG

RAG keeps the core model fixed, so you can update answers simply by adding or revising documents.

It keeps answers current because the LLM will incorporate the latest company policies or data without any retraining.

Since responses are drawn from real documents, RAG can substantially reduce hallucinations (made-up answers) and improves auditability. It also offers strong data control because proprietary information stays in a secured database and is only used at query time, which helps with compliance.

Challenges of RAG

Building a RAG system involves engineering effort.

You must ingest and index your data (e.g. into a vector DB) and maintain that pipeline. Each query incurs the extra step of retrieval, so RAG can be slower or more resource-intensive at runtime than a standalone model.

In high-volume settings, you may need caching or more compute to keep latency low.
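
One common way to apply caching is to memoize retrieval results for repeated queries. The sketch below uses Python’s standard `functools.lru_cache`; the `slow_search` function, its simulated delay, and the normalization rule are hypothetical and only illustrate the idea.

```python
import time
from functools import lru_cache

CALLS = {"count": 0}  # tracks how often the expensive search actually runs


def slow_search(query: str) -> tuple[str, ...]:
    """Hypothetical stand-in for an expensive vector search."""
    CALLS["count"] += 1
    time.sleep(0.01)  # simulated retrieval latency
    return (f"document matching '{query}'",)


@lru_cache(maxsize=1024)
def cached_search(normalized_query: str) -> tuple[str, ...]:
    """Return cached results when the same normalized query was seen before."""
    return slow_search(normalized_query)


def retrieve(query: str) -> tuple[str, ...]:
    """Normalize case and whitespace so near-identical queries share a cache entry."""
    return cached_search(" ".join(query.lower().split()))


first = retrieve("What is the refund policy?")
second = retrieve("what is the   refund policy?")  # served from the cache
print(CALLS["count"], cached_search.cache_info())
```

A cache like this trades freshness for speed, so entries should be cleared (for example with `cached_search.cache_clear()`) whenever the underlying documents change.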

Finally, RAG is limited by the LLM’s context window: the retrieved text and the question together must fit within the model’s input length.
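
A simple way to respect that limit is to add retrieved chunks in ranked order until a token budget runs out. The sketch below assumes a rough rule of thumb of about four characters per token for English text; real tokenizers differ, and the window size and reserved answer length are hypothetical numbers.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate (assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def fit_to_budget(
    question: str,
    ranked_chunks: list[str],
    context_window: int,
    reserved_for_answer: int,
) -> list[str]:
    """Keep the highest-ranked chunks that fit alongside the question and the answer."""
    budget = context_window - reserved_for_answer - estimate_tokens(question)
    selected = []
    for chunk in ranked_chunks:
        cost = estimate_tokens(chunk)
        if cost > budget:
            break  # stop at the first chunk that no longer fits
        selected.append(chunk)
        budget -= cost
    return selected


# Three hypothetical chunks of about 100 tokens each, already ranked by relevance.
chunks = ["a" * 400, "b" * 400, "c" * 400]
kept = fit_to_budget(
    "What is our refund policy?", chunks, context_window=300, reserved_for_answer=80
)
print(f"Kept {len(kept)} of {len(chunks)} chunks")
```

Stopping at the first chunk that does not fit keeps the most relevant material; an alternative design would skip oversized chunks and keep looking for smaller ones.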

Challenges We Faced With a RAG System We Recently Built

We recently built a full editorial team for a client to manage their crypto blog. The process included finding news, writing articles based on it, checking the article for any factual inaccuracies or hallucinated facts, and then posting everything to Google Docs for a human editor to perform a final check on each article.

We used RAG to enforce a consistent content structure in each article: lead, paragraphs, insights, and so on. The system also pulled recent news, which we classified with AI to decide whether it was related to the article. Related items were included in the RAG pipeline.

Building the RAG system was fairly straightforward, but it was somewhat slow at runtime: searching and compiling news took about 5 minutes per article. We could have cut that time in half by doing two things: supplying potentially related news up front instead of having the AI search for and classify it on every run for every article, and fine-tuning the model on the desired content structure. Still, the system cut costs and shortened the time to publish compared with a human doing the same work.

We also occasionally ran into context window limits when too much data was passed through.

What is Fine-Tuning?

Fine-tuning is the process of further training a pre-trained LLM on a specialized dataset so it “remembers” domain-specific knowledge.

In practice, you collect examples (e.g. product descriptions, help transcripts, compliance documents) and run additional training passes on the model.

The model’s internal weights are adjusted so it internalizes your business terminology, style, and reasoning patterns.

Fine-tuning can be done on all parameters or using parameter-efficient methods (like LoRA/QLoRA) to reduce compute.
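
To see why parameter-efficient methods reduce compute, consider the arithmetic behind LoRA. It freezes the original weight matrix and trains two small low-rank matrices instead, so a layer of size d_out × d_in with rank r has r × (d_out + d_in) trainable parameters rather than d_out × d_in. The layer size and rank below are hypothetical values chosen for illustration.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for LoRA: B is (d_out x rank), A is (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer: one 4096 x 4096 projection matrix, LoRA rank 8.
full = full_finetune_params(4096, 4096)
lora = lora_params(4096, 4096, rank=8)
print(f"full: {full:,}  LoRA: {lora:,}  share trained: {lora / full:.2%}")
```

In this example LoRA trains well under 1% of the parameters in the layer, which is where most of the compute and memory savings come from.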

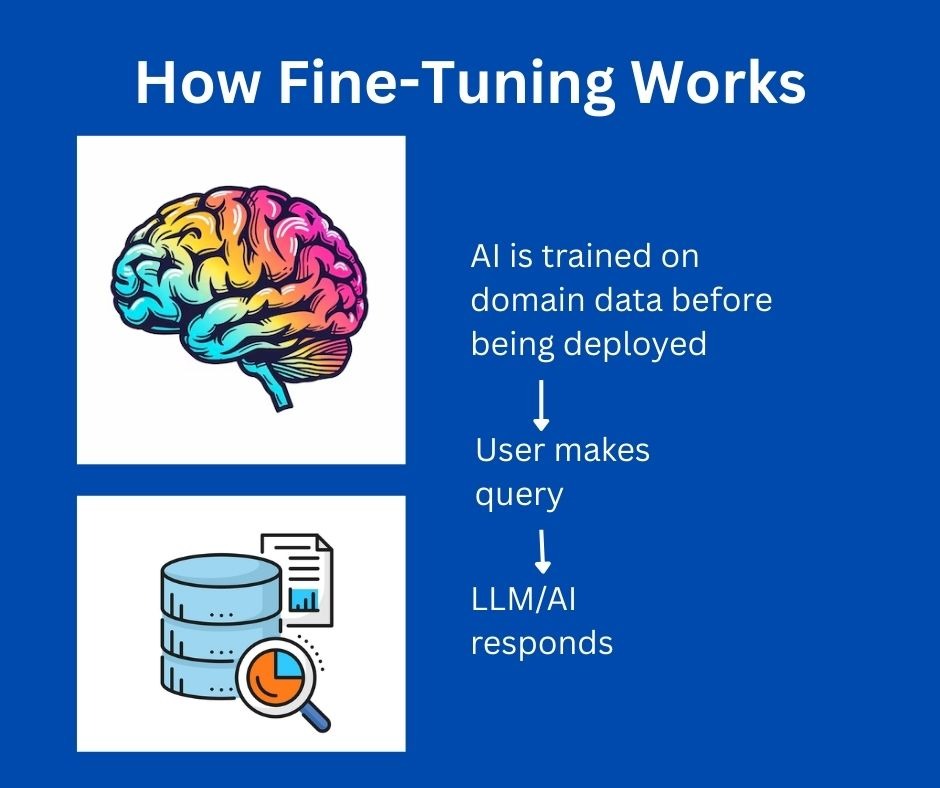

How Fine-Tuning Works

First, you assemble and preprocess a large labeled dataset from your company’s content.

Then the LLM is trained (often with GPUs or TPUs) on that data, “learning” to produce the desired output patterns. The trained model is evaluated and deployed and no longer needs external retrieval at runtime, since the knowledge is baked into its parameters.
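
The preprocessing step often amounts to cleaning example pairs and writing them in a format the training job accepts. The sketch below assumes a simple prompt/completion JSON Lines layout; the field names, the cleaning rules, and the sample data are illustrative assumptions, and the exact schema depends on the training framework or provider you use.

```python
import json


def to_training_records(examples: list[tuple[str, str]]) -> list[dict]:
    """Trim whitespace, drop empty pairs, and remove case-insensitive duplicates."""
    records, seen = [], set()
    for prompt, completion in examples:
        prompt, completion = prompt.strip(), completion.strip()
        if not prompt or not completion:
            continue  # skip incomplete examples
        key = (prompt.lower(), completion.lower())
        if key in seen:
            continue  # skip duplicates
        seen.add(key)
        records.append({"prompt": prompt, "completion": completion})
    return records


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(record, ensure_ascii=False) for record in records)


# Hypothetical raw examples collected from help transcripts.
raw = [
    ("How do I reset my password?", "Go to Settings, then Security, then Reset."),
    ("  how do I reset my password? ", "Go to Settings, then Security, then Reset."),
    ("What is the refund window?", "Refunds are available within 30 days."),
    ("Summarize the onboarding guide.", ""),
]
records = to_training_records(raw)
print(to_jsonl(records))
```

Deduplication and filtering matter because repeated or empty examples can skew what the model learns.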

Benefits of Fine-Tuning

A fine-tuned model develops deep domain expertise.

It gains inherent understanding of specialized terms and tasks that go beyond generic LLM capabilities.

For example, a fine-tuned medical chatbot might correctly interpret “PT services” as “physical therapy services” by virtue of its training.

Fine-tuning also allows strict style and format control: the model can be trained to write in your company’s tone or produce exactly formatted outputs.

Additionally, fine-tuned models are efficient at inference: they rely only on their own parameters, so inference can be faster (shorter prompts, no retrieval step) and easier to scale for high-volume, repetitive queries.

Challenges of Fine-Tuning

One of the major challenges of fine-tuning AI models is that the cost can be high.

That’s because you need substantial compute resources (powerful GPUs/TPUs) and expert ML engineers to collect/clean labeled data and run training jobs.

Once trained, a fine-tuned model’s knowledge is static: it reflects the data snapshot at training time. If business rules or data change, you must retrain the model to incorporate updates.

Fine-tuned models can also “forget” older knowledge (catastrophic forgetting) if training isn’t managed carefully.

Finally, because the model relies on its internal memory, it cannot cite sources or provide traceability for its answers. This is a potential drawback in regulated industries.

Use Cases in Key Industries

Below are example use cases for RAG and fine-tuning in a few key industries.

Finance

In fast-moving markets, RAG lets an AI fetch real-time financial data and regulations for answers.

For example, a financial chatbot using RAG could look up the latest stock prices, compliance rules, or client portfolio information in its knowledge base before responding.

Fine-tuning in finance is suited for highly specialized tasks. For example, one firm might fine-tune a model on decades of internal financial reports and accounting rules to ensure it generates precise earnings summaries or risk assessments.

Healthcare

RAG can help keep medical AI tools current by retrieving patient records, recent research, or drug databases at inference time.

For instance, a clinical assistant could use RAG to fetch up-to-date treatment guidelines or patient lab results when answering a doctor’s query.

Meanwhile, fine-tuning is valuable in healthcare for learning medical terminology and context: training an LLM on anonymized patient records, textbooks, and clinical notes enables it to “think” like a medical expert.

E-commerce

Retail companies often need AI that can recommend products and answer questions about inventory.

A RAG-based assistant might dynamically pull current inventory and pricing from the store database, so it always knows what’s in stock.

Fine-tuning, on the other hand, can make the AI adopt a brand voice or specialize in tasks like writing product descriptions or handling checkout dialogues.

For example, an online retailer could fine-tune a model on its past customer service chats so that the AI replies with consistent style and terminology aligned to the brand.

Comparison: Benefits and Trade-offs

Business leaders must weigh several factors when choosing RAG, fine-tuning, or both. Below are the main considerations, along with the strengths and weaknesses of each approach.

Data Freshness & Accuracy

RAG shines with constantly changing data.

By retrieving the latest documents or records for each query, it ensures answers reflect current facts. This greatly reduces the risk of outdated or hallucinated responses.

In contrast, a fine-tuned model has “frozen” knowledge. It can perform exceptionally well on known scenarios, but if new information emerges (a new policy or product), its answers may be stale until you retrain.

Cost & Scalability

Fine-tuning requires significant upfront investment: expensive compute for training and effort to prepare large labeled datasets.

Once trained, though, inference is efficient. RAG has a lower up-front cost (no training cycle) and lets you leverage existing data stores.

However, RAG adds overhead on every query (running a search across the knowledge base), so it can be more costly or complex at runtime.

In general, fine-tuning is “capital-intensive” but can scale inference cost-effectively, while RAG has a quicker deployment but higher ongoing compute per query.

Performance & Latency

A fine-tuned model responds using its internal knowledge, so it only needs the LLM itself at runtime. This often means lower latency and predictable performance.

One advantage to keep in mind is that a fine-tuned model will have a shorter prompt (just the query) and thus faster inference and lower cost at high volume.

Meanwhile, RAG adds steps. Every query must trigger a search in the database. If not carefully optimized, RAG systems can be slower and harder to tune for low-latency production environments.

Data Security & Compliance

RAG provides an advantage for sensitive data. Because proprietary documents stay in a local database and are only accessed at query time, you maintain full control over what data the AI sees.

That is ideal in regulated industries (finance, healthcare) where it’s critical to audit and restrict data access. Fine-tuning, by contrast, involves embedding data into the model’s parameters.

If the model is hosted externally, that can raise privacy concerns unless the data is anonymized and the service is trusted.

Maintenance & Updates

RAG systems can be more agile to maintain because adding or correcting documents immediately affects answers. For example, fixing a policy in the knowledge base makes that correction available instantly.
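
A toy example makes the point concrete. The in-memory dictionary below stands in for a real knowledge base, and the policy text and helper names are hypothetical; the takeaway is that the very next prompt reflects the correction without any retraining step.

```python
# Hypothetical in-memory knowledge base keyed by topic.
knowledge_base = {"return-policy": "Returns are accepted within 14 days."}


def retrieve(topic: str) -> str:
    """Look up the current document for a topic at query time."""
    return knowledge_base.get(topic, "No document found.")


def build_prompt(question: str, topic: str) -> str:
    """Assemble the prompt from whatever the knowledge base holds right now."""
    return f"Context: {retrieve(topic)}\nQuestion: {question}"


question = "How long do customers have to return an item?"
before = build_prompt(question, "return-policy")

# Correct the policy document; no model weights change.
knowledge_base["return-policy"] = "Returns are accepted within 30 days."
after = build_prompt(question, "return-policy")

print(before)
print(after)
```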

On the other hand, fine-tuned models require deliberate retraining for updates. If you need to reflect a change (new regulations, new product lines), the fine-tuned model must be re-trained on the updated data, which can be time-consuming and costly.

Over time, businesses often find that combining approaches (fine-tune for core expertise and RAG for changing data) yields the best balance of precision and freshness.

Frequently Asked Questions

What is the main difference between RAG and fine-tuning?

RAG augments an LLM at query time by retrieving and appending business data, while fine-tuning involves retraining the model on domain data. RAG does not change the model’s weights, whereas fine-tuning updates the model so that knowledge is internalized.

Are RAG and fine-tuning mutually exclusive?

No. They solve different problems and can complement each other, and a hybrid setup is often recommended. For example, you might fine-tune a model for brand tone and task format, then use RAG to supply real-time facts. A related technique, “retrieval-augmented fine-tuning” (sometimes called RAFT), goes a step further and fine-tunes the model on examples that include retrieved documents so it learns to use them well.

Is one approach always cheaper than the other?

It depends on the scenario. Fine-tuning usually requires a bigger upfront budget: gathering/labeling training data and running expensive training jobs. Once done, inference costs are modest. RAG is faster to implement (no training needed), but it incurs runtime costs for search and larger prompts. In general, fine-tuning is costlier to set up, whereas RAG spreads cost into ongoing operations.

Which is better for protecting sensitive data?

RAG is often safer for confidential information. In a RAG setup, private documents live in your secured database, and only the passages retrieved for a given query are passed to the LLM in the prompt. The data never becomes part of the model’s weights. Fine-tuning requires putting the data into the model training process, where it can become embedded in the model’s parameters, which can be a security concern.

Can fine-tuned models hallucinate or go out of date?

Yes. A fine-tuned model can still produce incorrect answers if asked about something outside its training. Since its knowledge is fixed at training time, it may hallucinate if the question involves new facts it never saw. RAG helps mitigate hallucination by explicitly grounding answers in retrieved documents.

How often should I update each system?

For RAG, you should regularly curate the knowledge base (add new documents, remove outdated ones). No retraining is needed unless you change the model itself. For fine-tuning, every significant change in your domain data usually means you need another fine-tuning cycle. In fast-changing fields, that can be frequent; otherwise it might be periodic (e.g. quarterly) to stay current.
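
For the RAG side, curation can be partly automated by flagging documents that have not been reviewed recently. The sketch below is one possible approach; the `last_reviewed` field, the 90-day threshold, and the sample documents are assumptions for illustration.

```python
from datetime import date, timedelta


def stale_documents(docs: list[dict], today: date, max_age_days: int = 90) -> list[str]:
    """Return ids of documents last reviewed more than max_age_days ago."""
    cutoff = today - timedelta(days=max_age_days)
    return [doc["id"] for doc in docs if doc["last_reviewed"] < cutoff]


# Hypothetical knowledge base metadata.
documents = [
    {"id": "pricing", "last_reviewed": date(2024, 1, 10)},
    {"id": "onboarding", "last_reviewed": date(2024, 5, 20)},
]
to_review = stale_documents(documents, today=date(2024, 6, 1))
print("Needs review:", to_review)
```

Flagged documents can then be refreshed or removed by a human owner, which keeps retrieval results current without touching the model.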

Do I need ML experts to use these?

RAG generally requires engineering skills to build the retrieval pipeline (data ingestion, vector search, etc.) but less deep AI expertise at runtime. Fine-tuning requires experienced ML engineers to prepare data, select hyperparameters, and train models. Many organizations start with RAG (easier to implement) and then bring in fine-tuning for areas that need extra specialization.
