Ever wonder how a machine learning model actually learns?

The answer often lies in a simple but powerful algorithm called gradient descent.

In machine learning, gradient descent is one of the most important techniques for optimizing models – it’s how models adjust their internal parameters to get better at making predictions.

This article will explain what gradient descent is, how it works, and how machine learning uses gradient descent.

We’ll also cover different types of gradient descent, use real-world analogies for intuition, discuss challenges like learning rates and local minima, and answer some common beginner questions. Let’s dive in!

What Is Gradient Descent?

Gradient descent is an optimization algorithm that improves a model by minimizing its error.

In plain English, it’s a method of tweaking a model’s parameters (like weights in a neural network) to reduce the difference between the model’s predictions and the actual correct values.

The algorithm does that iteratively: it evaluates how far off the model is, then adjusts the parameters step-by-step in the direction that makes the model a little more accurate (the direction of steepest decrease in the “loss” or error).

Over many iterations, the small adjustments add up, and the model’s error gets smaller and smaller, ideally reaching a minimum.

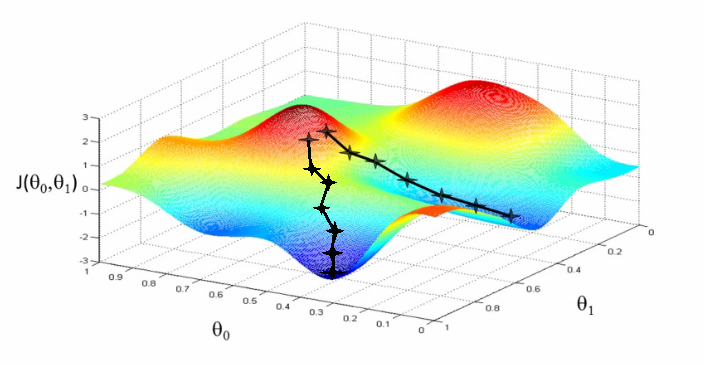

Think of the loss function (also called a cost function) as a landscape of hills and valleys. The height of the landscape at any point represents the model’s error (loss) for a particular set of parameters.

Gradient descent is like a hiker trying to find the lowest valley in this foggy landscape. The hiker (the algorithm) can’t see the entire landscape due to fog (it doesn’t know where the absolute lowest point is at first).

What it can do is feel the slope of the ground underfoot (compute the gradient) and take a step in the steepest downward direction – that is, move in the direction where the ground slopes down most sharply.

Illustration of how gradient descent looks

By repeatedly taking steps downhill, the hiker will descend into a valley. In other words, gradient descent continuously adjusts the model parameters in the direction that decreases the loss the fastest, gradually guiding the model toward the point of minimum error.

This process of “stepping downhill” is essentially how machine learning models learn from data. Gradient descent is central to modern machine learning, used in everything from simple linear regression to deep neural networks.

It’s the workhorse that tunes the model’s parameters so that the model’s predictions become as accurate as possible. Without gradient descent (or a similar optimization algorithm), a complex model would have no practical way to automatically improve itself by learning from training data.

How Gradient Descent Works (Step by Step)

Now that we know the intuition behind gradient descent, let’s break down how it works in a simple step-by-step process.

Imagine we have a machine learning model with some parameters (numbers the model uses to make predictions). Gradient descent will iteratively adjust these numbers to reduce the model’s error:

- Start with Initial Parameters: We begin by guessing initial values for the model’s parameters. Often they are set randomly or to small numbers (for example, weights in a neural network might start near zero). This is our starting point in the error landscape.

- Calculate the Current Loss: Using the current parameter values, we let the model make predictions on our training data and then calculate the loss (error) of those predictions. The loss function gives us a numerical value for how “wrong” the model currently is. For instance, in a regression problem we might use Mean Squared Error to measure the difference between predicted values and actual values.

- Compute the Gradient (Slope): Next, we figure out the gradient of the loss with respect to the model’s parameters. The gradient is a vector of partial derivatives, one per parameter – in simpler terms, it points in the direction in which the loss increases the fastest. It’s like checking the slope of the terrain in each direction. If the gradient is positive for a parameter, increasing that parameter slightly would increase the loss; if it’s negative, increasing the parameter slightly would decrease the loss. This computation is how our hiker feels the slope of the ground underfoot.

- Adjust the Parameters (Move Opposite the Gradient): We then update each parameter in the opposite direction of the gradient. In other words, we move downhill in the loss landscape. We take a small step proportional to the gradient’s magnitude – this step size is controlled by a factor called the learning rate (more on this soon). For example, if a weight has a gradient of +0.5 (indicating the loss would increase if the weight increases), we decrease that weight a little. If another weight has a gradient of -0.3, we increase that weight slightly (because a negative gradient means moving it up will reduce the loss). After this step, the model should hopefully have a slightly lower loss than before, since we moved it in the direction that reduces error.

- Repeat Until Convergence: The above steps (calculate loss -> compute gradient -> update parameters) are repeated over and over. With each iteration, the model’s parameters (hopefully) get closer to the values that minimize the loss. We keep iterating until a stopping condition is met. Common stopping criteria are: the change in loss becomes very small (meaning the improvements are negligible), or we hit a maximum number of iterations. When further iterations don’t significantly reduce the loss, the model is said to have converged – it has settled on a set of parameters where the loss on the training data is no longer improving noticeably, which is typically at or near a minimum.
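
To make the five steps above concrete, here is a minimal sketch in plain Python. It fits a straight line y = w*x + b to a tiny made-up dataset using Mean Squared Error. The data, the function names, the learning rate of 0.05, and the stopping tolerance are all assumptions chosen for illustration, not recommended settings.

```python
# Toy data generated from y = 2x + 1 (made up for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

def mse_loss(w, b):
    """Mean Squared Error of the line y = w*x + b on the toy data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def mse_gradient(w, b):
    """Partial derivatives of the MSE with respect to w and b."""
    n = len(xs)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return grad_w, grad_b

def gradient_descent(learning_rate=0.05, max_iters=5000, tolerance=1e-12):
    w, b = 0.0, 0.0                            # 1. start with initial parameters
    loss = mse_loss(w, b)                      # 2. calculate the current loss
    for _ in range(max_iters):
        grad_w, grad_b = mse_gradient(w, b)    # 3. compute the gradient
        w -= learning_rate * grad_w            # 4. move opposite the gradient
        b -= learning_rate * grad_b
        new_loss = mse_loss(w, b)
        converged = abs(loss - new_loss) < tolerance
        loss = new_loss
        if converged:                          # 5. stop once the loss barely changes
            break
    return w, b, loss

w, b, final_loss = gradient_descent()
print(w, b, final_loss)  # w and b end up close to 2 and 1
```

Because this toy loss is convex, the loop heads toward the single global minimum; the same loop on a more complicated loss would only be guaranteed to settle somewhere the loss stops improving.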

This iterative process gradually nudges the model toward better performance.

In the best case (for a nicely shaped convex loss landscape and a suitably small learning rate), gradient descent will find the global minimum of the loss function – the absolute lowest point, which means the model’s error is as low as possible.

In more complex scenarios, it might end up in a local minimum (a low point that isn’t the absolute lowest, more on that later).

Either way, gradient descent usually gets the model to a good place where the error is much smaller than where we started.

Key point: Gradient descent turns the abstract problem of “find the best model parameters” into a concrete procedure of repeatedly taking small steps to reduce error. It’s a bit like learning by guided trial and error – each step is a slight tweak, but instead of guessing, the model uses the gradient as the guide telling it which direction is “better” (i.e., lowers the error).
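
To see how the sign of the gradient acts as that guide, here is a small sketch that estimates the slope of a made-up one-parameter loss with a finite difference. The loss function, the starting points, and the step size of 0.1 are assumptions for illustration only; real training code normally computes gradients analytically or with automatic differentiation rather than this way.

```python
def loss(w):
    """A made-up one-parameter loss with its minimum at w = 3."""
    return (w - 3.0) ** 2

def numerical_gradient(f, w, eps=1e-6):
    """Central-difference estimate of the slope of f at w."""
    return (f(w + eps) - f(w - eps)) / (2 * eps)

# To the right of the minimum the slope is positive, so we step left.
w_right = 5.0
g_right = numerical_gradient(loss, w_right)
w_right_next = w_right - 0.1 * g_right

# To the left of the minimum the slope is negative, so we step right.
w_left = 1.0
g_left = numerical_gradient(loss, w_left)
w_left_next = w_left - 0.1 * g_left

print(g_right, w_right_next)
print(g_left, w_left_next)
```

In both cases the same rule (subtract a small multiple of the gradient) moves the parameter toward the minimum and lowers the loss.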

Types of Gradient Descent

There isn’t just one way to do gradient descent.

In practice, there are a few variants of gradient descent that differ in how much data they use to compute the gradient at each step. The goal is always the same – adjust parameters to reduce loss – but these types balance speed and accuracy in different ways:

- Batch Gradient Descent: This version uses the entire training dataset to compute the gradient and update the parameters for each step. That means after each full pass through all the training examples (called an “epoch”), it adjusts the parameters once. Batch gradient descent is straightforward and the gradient calculation is very accurate (since it considers all data each time), but it can be slow for very large datasets because you have to evaluate every data point before each update. It’s like taking a very measured step using complete information, which is reliable but can be time-consuming.

- Stochastic Gradient Descent (SGD): In the purest form of SGD, the model updates the parameters using one random training example at a time. Instead of waiting to look at the whole dataset, you pick one example, compute the loss and gradient for that single example, and immediately adjust the parameters. This makes updates very fast and frequent – you’re essentially taking many quick, small steps using minimal information. The downside is that each step’s direction is a bit noisy or unreliable (one example might suggest a direction that’s not universally optimal). As a result, the loss might fluctuate up and down a bit from step to step, and the path toward the minimum can be a bit zig-zag. However, SGD often still converges faster in wall-clock time for large datasets because it doesn’t waste time computing gradients on the entire dataset for every step.

- Mini-Batch Gradient Descent: This approach is a compromise between batch and stochastic gradient descent. Instead of using all data or just one example, we use a small batch of examples (e.g. 32, 64, or 128 samples) to compute each gradient and update. Mini-batch gradient descent combines the advantages of both: it’s more stable and accurate per step than pure SGD (because averaging over a batch reduces noise), but it’s faster and more scalable than full batch (because each update is based on only a fraction of the data). It also takes advantage of modern hardware (like GPUs) which can process batches efficiently in parallel. In fact, when people casually say “SGD” in deep learning, they often really mean mini-batch gradient descent with a reasonably sized batch (pure one-example-at-a-time SGD is less common in practice).

In summary, batch uses all data each step (steady but potentially slow), stochastic uses one data point (fast but noisy), and mini-batch uses a handful of data points (a balanced approach). All three are based on the same principle of following the gradient downhill, just with different trade-offs in efficiency and stability.
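
The three variants can be sketched with a single training loop where only the batch size changes. Everything here is an assumption for illustration: the toy data (20 noise-free points from y = 2x), the one-parameter model y = w*x, the function names, and the settings. Because the data has no noise, all three runs land on the same answer; what differs is how many parameter updates each one makes.

```python
import random

# Toy data from y = 2x, with x = 0.1, 0.2, ..., 2.0 (illustrative only).
data = [(k / 10.0, 2.0 * (k / 10.0)) for k in range(1, 21)]

def gradient(w, batch):
    """Gradient of the MSE of y = w*x, averaged over the examples in batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def train(batch_size, epochs=50, learning_rate=0.1, seed=0):
    rng = random.Random(seed)
    w, updates = 0.0, 0
    for _ in range(epochs):
        examples = data[:]
        rng.shuffle(examples)                 # visit examples in random order
        for start in range(0, len(examples), batch_size):
            batch = examples[start:start + batch_size]
            w -= learning_rate * gradient(w, batch)
            updates += 1
    return w, updates

w_batch, n_batch = train(batch_size=len(data))  # batch: one update per epoch
w_sgd, n_sgd = train(batch_size=1)              # stochastic: one example per update
w_mini, n_mini = train(batch_size=4)            # mini-batch: a few examples per update

print(w_batch, n_batch)
print(w_sgd, n_sgd)
print(w_mini, n_mini)
```

With noisy real-world data, the single-example updates would jump around more from step to step, which is the zig-zag behavior described above.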

How Machine Learning Uses Gradient Descent

At its core, machine learning is about adjusting a model’s internal parameters so that its predictions become more accurate over time. Gradient descent is the mechanism that makes this adjustment possible.

When a machine learning model processes training data, it produces predictions. These predictions are then compared to the correct answers, and the difference between them is calculated using a loss function. This loss represents how wrong the model currently is.

Gradient descent uses this loss to guide the learning process. It calculates the gradient, which shows how the model’s parameters should change to reduce the error. The algorithm then adjusts those parameters slightly in the direction that decreases the loss.

This process repeats thousands or even millions of times during training. With each iteration, the model becomes a little more accurate because its parameters are constantly being nudged toward better values.

In simple terms, gradient descent is the feedback loop that allows machine learning models to improve themselves. Without it, the model would make predictions, but it would have no way to learn from its mistakes.

Challenges and Key Considerations in Gradient Descent

Gradient descent is a relatively simple idea, but making it work well in practice involves a few important considerations. Two big ones are the choice of learning rate and the issue of local minima.

Learning Rate (Step Size)

The learning rate is a crucial hyperparameter that determines how big a step we take with each update. It’s usually a small positive number (like 0.1, 0.01, or 0.001).

If the learning rate is too high, the algorithm might take huge leaps and overshoot the minimum – imagine our hiker taking strides so big that they leap over the valley floor and land on the opposite slope, bouncing back and forth or even ending up higher with each step. This can prevent the model from converging, and the loss might even increase.

On the other hand, if the learning rate is too low, the steps are tiny and progress becomes painfully slow. The model will eventually get there, but it may take an extremely long time to reach a good solution (or you might give up early because it looks like it’s not improving).

Choosing a good learning rate is therefore key: it needs to be large enough to make progress in a reasonable time, but not so large that it destabilizes the training. In practice, people often use techniques like learning rate schedules or adaptive optimizers to adjust the step size during training.
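
Here is a small sketch of that trade-off on the simplest possible loss, f(x) = x², whose gradient is 2x and whose minimum is at x = 0. The three learning rates, the starting point, and the step count are assumptions picked to make each behavior obvious on this particular function; they are not general recommendations.

```python
def run(learning_rate, steps=50, start=5.0):
    """Gradient descent on f(x) = x**2, whose gradient is 2*x."""
    x = start
    for _ in range(steps):
        x -= learning_rate * 2 * x
    return x

too_high = run(1.1)      # every step overshoots; |x| grows and the run diverges
too_low = run(0.0001)    # steps are tiny; x has barely moved from 5.0
reasonable = run(0.1)    # x shrinks steadily toward the minimum at 0

print(too_high, too_low, reasonable)
```

On this function each update multiplies x by (1 - 2 * learning_rate), so any learning rate above 1.0 makes the magnitude of x grow instead of shrink. The exact threshold depends on the loss, which is why the learning rate usually has to be tuned per problem.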

Local Minima (and Saddle Points)

Gradient descent is great at finding a minimum of the loss function, but there’s a catch: the minimum it finds could be a local minimum rather than the global minimum.

Think of a local minimum as a small valley that is lower than its surroundings but higher than the deepest valley in the entire landscape.

Our hiker could get stuck in a foggy mountain lake – a low spot that isn’t the absolute lowest point on the mountain. In terms of machine learning, this means the algorithm might converge to a set of parameters that is good but not the best possible.

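Additionally, there are saddle points – spots where the slope is zero but the surface curves down in some directions and up in others – and nearly flat plateaus. Both can slow down progress because the gradient there is close to zero. The good news is that for many problems (especially convex ones like simple linear regression), the loss surface is nice and smooth with one global valley, so local minima aren’t an issue.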

For more complex models like deep neural networks, the loss landscape can be complicated, but in practice gradient descent (with some tweaks) still usually finds pretty good solutions. Techniques like using momentum, adjusting the learning rate, or restarting training from different random starting points can help the algorithm get past local minima or plateaus if needed.
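
As a rough illustration of the local-minimum problem, the sketch below runs plain gradient descent on a made-up one-dimensional loss with two valleys. The function f(x) = x⁴ - 3x² + x, the starting points, and the settings are assumptions chosen so the effect is easy to see; real loss landscapes have far more dimensions.

```python
def f(x):
    """A made-up non-convex loss with two valleys."""
    return x**4 - 3 * x**2 + x

def grad_f(x):
    """Derivative of f."""
    return 4 * x**3 - 6 * x + 1

def descend(x, learning_rate=0.01, steps=1000):
    for _ in range(steps):
        x -= learning_rate * grad_f(x)
    return x

x_from_right = descend(2.0)    # settles in the shallower valley, near x = 1.13
x_from_left = descend(-2.0)    # settles in the deeper valley, near x = -1.30

print(x_from_right, f(x_from_right))
print(x_from_left, f(x_from_left))
```

The same algorithm with the same settings ends up in a different valley depending only on where it starts, which is why trying several starting points can sometimes find a better solution.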

It’s also worth noting that many variants and improvements to basic gradient descent have been developed to address these challenges.

For example, Momentum adds a fraction of the previous update to the current one to smooth out the path and help plow through small bumps in the landscape.

Optimizers like RMSProp and Adam automatically adjust the learning rate for each parameter and combine ideas like momentum and adaptive step sizes. These are all built on the core concept of gradient descent, but with enhancements that often lead to faster or more reliable convergence.
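
The momentum idea can be sketched in a few lines. This is one common way to write it (a “velocity” that carries over a fraction of the previous update); the loss f(x) = x², the coefficient name beta, and the values 0.9 and 0.1 are assumptions for illustration, and libraries differ in the exact formulation they use.

```python
def grad(x):
    """Gradient of the illustrative loss f(x) = x**2."""
    return 2 * x

def momentum_descent(x=5.0, learning_rate=0.1, beta=0.9, steps=300):
    velocity = 0.0
    for _ in range(steps):
        # Keep a fraction (beta) of the previous update, then add the new gradient step.
        velocity = beta * velocity - learning_rate * grad(x)
        x += velocity
    return x

x_final = momentum_descent()
print(x_final)  # close to the minimum at 0
```

Setting beta to 0 removes the carried-over term and turns this back into plain gradient descent.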

Frequently Asked Questions

Why is gradient descent important in machine learning?

Gradient descent is important because it is the primary way that many machine learning models “learn” from data.

It enables models to automatically improve by minimizing their error. In fact, gradient descent is central to modern machine learning – when you train a neural network, perform linear regression, or build many other models, you’re likely using some form of gradient descent to adjust the model’s parameters.

Without gradient descent (or a similar optimizer), we would have no efficient way to handle models with lots of parameters. It’s essential for training deep learning models and has proven to be scalable and effective across a wide range of tasks.

In short, gradient descent is important because it makes machine learning algorithms practically feasible, finding the model settings that best fit the data.

What is a learning rate in the context of gradient descent?

The learning rate is a small tuning parameter that controls how big each step of the gradient descent update is.

In the update rule, you often see something like new_parameter = old_parameter - learning_rate * gradient. The learning rate (often denoted by α or η) is the multiplier in front of the gradient that determines the step size.
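
As a quick worked example of that update rule, the sketch below applies it once to two parameters. The function name, the starting values of 1.0, and the learning rate of 0.1 are made up for illustration.

```python
def update_parameters(parameters, gradients, learning_rate):
    """Apply new_parameter = old_parameter - learning_rate * gradient to each parameter."""
    return [p - learning_rate * g for p, g in zip(parameters, gradients)]

old_parameters = [1.0, 1.0]
gradients = [0.5, -0.3]      # positive for the first parameter, negative for the second

new_parameters = update_parameters(old_parameters, gradients, learning_rate=0.1)
print(new_parameters)  # first parameter moves down to about 0.95, second up to about 1.03
```

Doubling the learning rate would double both step sizes without changing their directions.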

If you imagine gradient descent as taking steps down a hill, the learning rate is like the length of your stride. A higher learning rate means larger steps, and a lower learning rate means smaller, cautious steps.

Choosing the right learning rate is crucial: it’s essentially a trade-off between speed and stability of learning. Most beginners start with a moderate learning rate and adjust based on whether the training seems to be diverging (too high) or creeping slowly (too low).

What happens if the learning rate is too high or too low?

If the learning rate is too high, gradient descent will take steps that are too large and might overshoot the optimal point repeatedly. This often leads to oscillation or even divergence – the training loss might go down then shoot up, or just fail to decrease consistently, because the algorithm is leaping back and forth across the minimum without settling.

It’s like trying to descend a hill in giant leaps and constantly missing the lowest point. Conversely, if the learning rate is too low, the algorithm will make very slow progress. The loss will decrease, but extremely slowly, and it may take an impractically long time to reach a good minimum (if ever, within a reasonable training time). In practice, you’ll notice this as an almost flat training curve that barely improves.

The key is to find a learning rate in the sweet spot: large enough to converge in a reasonable time but not so large as to cause unstable training. Techniques such as learning rate schedules (gradually reducing the learning rate during training) or adaptive optimizers (which adjust effective learning rates on the fly) are commonly used to handle this issue.
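
A learning rate schedule can be as simple as shrinking the rate by a fixed factor every step. The sketch below uses an exponential decay on the loss f(x) = x²; the function names, the initial rate of 0.9, and the decay factor of 0.95 are assumptions for illustration, and many other schedules (step decay, cosine, warm-up) are used in practice.

```python
def scheduled_learning_rate(initial_rate, decay, step):
    """Exponential decay: the rate shrinks by a constant factor each step."""
    return initial_rate * decay ** step

def descend_with_schedule(x=5.0, initial_rate=0.9, decay=0.95, steps=100):
    """Gradient descent on f(x) = x**2 (gradient 2*x) with a decaying learning rate."""
    for step in range(steps):
        learning_rate = scheduled_learning_rate(initial_rate, decay, step)
        x -= learning_rate * 2 * x
    return x

x_scheduled = descend_with_schedule()
print(scheduled_learning_rate(0.9, 0.95, 0), scheduled_learning_rate(0.9, 0.95, 50))
print(x_scheduled)
```

The early steps are large enough to overshoot and bounce across the minimum, and the later, smaller steps let the value settle.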

Is gradient descent the only optimization method for training models?

No – gradient descent is very popular, but it’s not the only optimization method available. There are many other optimizers and techniques. Some of them are actually variations or improvements on basic gradient descent.

For example, Momentum, RMSProp, and Adam are optimization algorithms that build upon gradient descent by changing how the learning rate is applied or by accumulating past gradients. These often converge faster or more smoothly than vanilla gradient descent. There are also second-order methods like Newton’s method, which uses second derivative information to find minima more directly, and quasi-Newton methods like L-BFGS, which approximate that information from past gradients (though these can be computationally expensive for large models).

Additionally, for certain problems, there are analytical solutions (e.g., the normal equation for linear regression) or other search-based methods (like evolutionary algorithms or simulated annealing), but those are either limited to specific cases or not as efficient for typical machine learning tasks.
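
For a sense of what an analytical solution looks like, here is a sketch for the simplest case: fitting a line y = slope * x + intercept to one input feature. With a single feature, the normal equation reduces to the familiar covariance-over-variance formula used below. The function name and the toy data are assumptions for illustration.

```python
def fit_line_closed_form(xs, ys):
    """Least-squares line through the points, computed directly with no iteration."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Toy data generated from y = 2x + 1 (made up for illustration).
slope, intercept = fit_line_closed_form([0.0, 1.0, 2.0, 3.0, 4.0],
                                        [1.0, 3.0, 5.0, 7.0, 9.0])
print(slope, intercept)  # 2.0 1.0
```

This gives the answer in one pass, but the approach only works when such a formula exists, which is not the case for most models with many interacting parameters.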

Gradient descent (and its variants) remains the workhorse because of its simplicity, efficiency, and broad applicability. It provides a good balance of performance and computational feasibility for training a wide range of machine learning models.

By now, you should have a solid understanding of how machine learning uses gradient descent to train models.

In summary, gradient descent is all about iteratively nudging model parameters in the direction that reduces error, much like finding your way down a foggy mountainside by feeling the slope under your feet. It’s a fundamental algorithm that powers the learning process for countless models in the field of AI.

With a grasp of gradient descent, you’re better equipped to understand what’s happening under the hood when models learn – and you’ll be ready to explore more advanced optimization tricks as you continue your machine learning journey. Good luck, and happy descending!