Agentic RAG systems bring a new level of autonomy to AI, embedding intelligent agents into the standard RAG pipeline.

By combining AI agents with retrieval-augmented generation, these systems can proactively plan multi-step information gathering, use various tools, and dynamically refine their outputs. The result is AI that’s not only backed by up-to-date knowledge, but also capable of reasoning and acting to solve complex tasks on its own.

This article will explain what agentic RAG systems are, how they differ from traditional RAG, their applications across industries, key benefits, and challenges.

What Are Agentic RAG Systems?

Agentic RAG refers to an advanced form of retrieval-augmented generation where AI “agents” are integrated into the RAG pipeline to enable autonomous decision-making.

To unpack that, let’s first recall what RAG means:

- Retrieval-Augmented Generation (RAG): A framework that connects a generative AI model (such as a large language model, or LLM) with an external knowledge base. Instead of relying solely on pre-trained knowledge, a RAG system retrieves relevant data (e.g. documents, facts) from external sources at query time and feeds it into the LLM’s context. This augments the model’s generation with up-to-date, domain-specific information. In a typical RAG pipeline, a user query is embedded and matched against a vector database to fetch related documents, which are then combined with the query so the LLM can generate a response. This makes answers more accurate and current without needing to retrain the model.

- Agentic AI / AI Agents: An AI agent is an AI (often an LLM) endowed with the ability to plan actions, use tools, and make decisions autonomously. Unlike a fixed chatbot that simply responds, an agent has a form of memory (to recall context and past interactions), can perform step-by-step reasoning, and can call external tools or APIs to accomplish tasks. In essence, an agent can figure out how to answer a complex query by breaking it into subtasks, retrieving information, calling functions, and so on – much like a human assistant might.
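To make the traditional retrieve-then-generate flow concrete, here is a toy, one-shot RAG pipeline. It is a minimal sketch under loud assumptions: a bag-of-words vector stands in for a real embedding model, an in-memory list stands in for the vector database, and `build_prompt` only assembles the augmented prompt that would be sent to an LLM (no model is called). All names and documents are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for an embedding model: a bag-of-words term-count vector.
def embed(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# In-memory "vector database": each document is stored alongside its vector.
DOCS = [
    "Refunds are processed within 5 business days.",
    "Our API rate limit is 100 requests per minute.",
    "Support is available Monday to Friday.",
]
INDEX = [(doc, embed(doc)) for doc in DOCS]

def retrieve(query: str, k: int = 1) -> list[str]:
    # Single retrieval pass: rank every stored document by similarity to the query.
    q = embed(query)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def build_prompt(query: str) -> str:
    # Augment the question with retrieved context; an LLM would receive this string.
    context = "\n".join(retrieve(query))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer using only the context."
```

For example, `build_prompt("What is the API rate limit?")` places the rate-limit document in the context. Note that the pipeline retrieves exactly once and never checks whether that context was sufficient; that fixed behavior is what the agentic variants below relax.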

Agentic RAG systems combine these ideas. In an agentic RAG, one or more AI agents are embedded into the retrieval-generation loop. Instead of a one-shot retrieve-and-answer, the agent can actively manage the retrieval process: it may reformulate queries, decide which knowledge source or tool to consult, retrieve more context if needed, and validate or refine the results. This makes the pipeline far more adaptive than a traditional RAG pipeline.

The agent essentially orchestrates the RAG components, often through patterns like reflection (self-checking), planning, and even collaboration with other agents. By doing so, agentic RAG systems can handle complex, multi-step tasks and deliver more context-aware and even personalized outputs.

Put simply, an agentic RAG system is a RAG-powered AI assistant that can think for itself about how to find and use information. It’s not limited to a single database or a single retrieval step. The agent can work through a problem, using whichever data and tools are necessary until it reaches a well-supported answer.

Agentic RAG vs. Traditional RAG

Agentic RAG builds on RAG’s strengths (grounding LLM responses in external knowledge) while addressing its limitations.

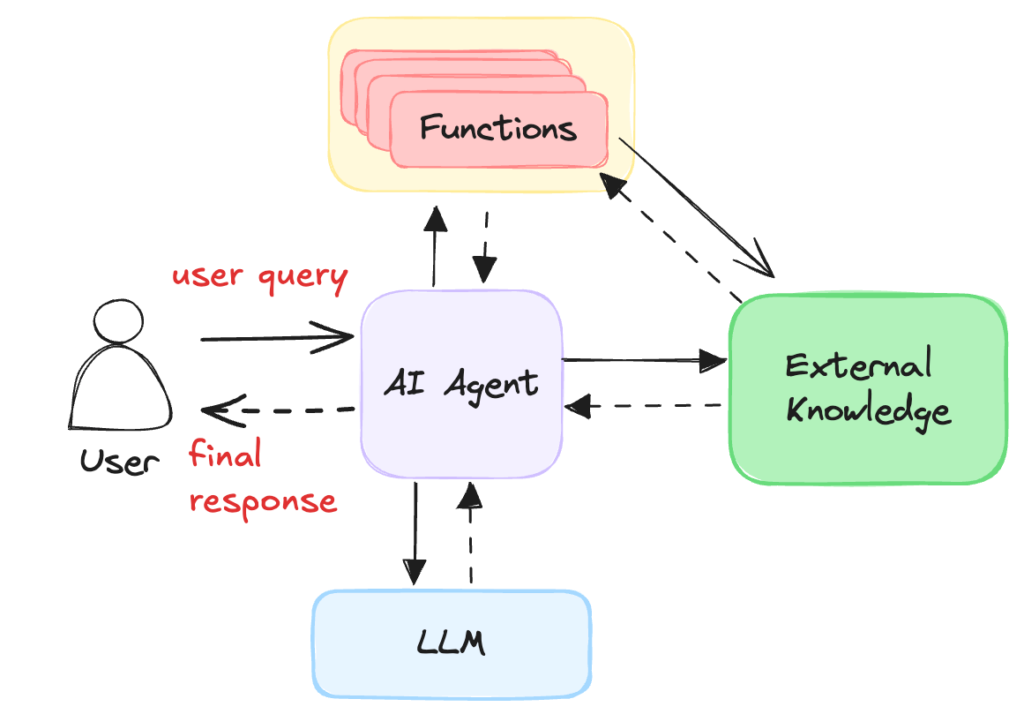

High-level overview of how AI RAG systems work (Source: Vectorize)

Here are key differences and improvements agentic RAG offers over traditional RAG:

- Flexibility in Data Sources: A traditional RAG pipeline typically connects an LLM to a single knowledge source (e.g. one vector database of documents). In contrast, agentic RAG can pull data from multiple knowledge bases and tools: an agent can choose among various databases, run web searches, call APIs, and so on, all within one session. A single query could therefore be answered using a combination of proprietary documents, public web data, calculators, or other tools as needed, something a static, single-source pipeline is not designed to do.

- Adaptability and Reasoning: A standard RAG pipeline is fairly reactive – it takes your query, retrieves matching info once, and generates a reply. It doesn’t adapt if the context is insufficient or if the query has multiple parts. Agentic RAG, however, introduces intelligent problem-solving. The agent can perform multi-step reasoning: for example, first searching a database, then asking a follow-up question or querying another source based on what it found. It can adjust its strategy if the initial results aren’t good, rather than requiring extensive prompt engineering by the user. In essence, agentic RAG turns a static lookup into a dynamic, iterative search process.

- Improved Accuracy and Self-Optimization: Traditional RAG doesn’t inherently verify whether the retrieved info fully answers the question; it just passes it to the LLM, leaving any optimization or validation to the user. Agentic RAG agents, on the other hand, can check and refine their results. They might notice that an answer seems incomplete or contradictory and loop back to retrieve more context. If the system records which retrievals led to good answers, it can also learn over time which sources tend to be most useful. This iterative checking can also reduce glaring errors.

- Scalability and Multi-Agent Collaboration: With agent frameworks, you can deploy networks of agents that handle different tasks in parallel. For instance, one agent might specialize in technical documentation while another handles financial data. They can work together and even verify each other’s outputs. This multi-agent approach allows the system to scale to more complex queries by dividing the labor. Traditional RAG is essentially single-threaded by comparison.

- Multimodal and Tool Use: Modern agentic RAG systems can incorporate multimodal data and various tools. Agents aren’t limited to text retrieval; they could, for example, analyze an image or call a mapping API if the task calls for it. Many new LLM-based agents can handle text, code, images, or audio. Traditional RAG mostly deals with text knowledge bases and outputs text. By leveraging tools (search engines, calculators, code interpreters, etc.), an agentic RAG system can solve a wider range of queries (like producing a chart from data, executing a live calculation, or querying an email inbox).
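The routing decision at the heart of the first and last points above can be sketched in a few lines. This is a hypothetical illustration, not how any particular framework does it: in a real agentic RAG system the LLM itself would usually choose the tool, whereas here simple rules stand in for that choice, and `search_internal_docs`, `search_web`, and `calculator` are stubs.

```python
import operator
import re
from typing import Callable

# Hypothetical sources/tools; real ones might be a vector store, a web search API, a calculator.
INTERNAL_DOCS = {"vacation policy": "Employees get 25 days of paid leave per year."}
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}
ARITH = r"(\d+)\s*([+*-])\s*(\d+)"

def search_internal_docs(query: str) -> str:
    hits = [text for key, text in INTERNAL_DOCS.items() if key in query.lower()]
    return hits[0] if hits else "no internal match"

def search_web(query: str) -> str:
    return f"[web results for: {query}]"  # stub for a web search API

def calculator(query: str) -> str:
    # Assumes route() already found an arithmetic expression in the query.
    m = re.search(ARITH, query)
    return str(OPS[m.group(2)](int(m.group(1)), int(m.group(3))))

def route(query: str) -> Callable[[str], str]:
    # Rule-based stand-in for the routing decision an LLM agent would make.
    if re.search(ARITH, query):
        return calculator
    if any(key in query.lower() for key in INTERNAL_DOCS):
        return search_internal_docs
    return search_web

def handle(query: str) -> tuple[str, str]:
    tool = route(query)
    return tool.__name__, tool(query)
```

With this sketch, `handle("What is 12 * 7?")` goes to the calculator and returns `("calculator", "84")`, while a policy question goes to the internal documents and anything else falls through to web search.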

A helpful analogy is to think of a traditional RAG system as a diligent employee who will fetch exactly what you ask for, but only what you explicitly specify. Meanwhile, an agentic RAG system is like a proactive team member that, when given a problem, will figure out the steps to solve it – searching multiple file cabinets, consulting experts (tools), double-checking answers – ultimately coming back with a well-thought-out solution.

It’s important to note that these enhancements come at the cost of added complexity (which we’ll discuss in challenges). Nevertheless, in scenarios where static pipelines fall short, agentic RAG brings smarter, context-aware AI to the table.

Practical Applications Across Industries

Agentic RAG systems are versatile and can be applied anywhere we need AI to handle dynamic, knowledge-intensive tasks. Here are a few prominent use cases across different domains.

Software Development and DevOps

In software engineering, agentic RAG can act as an intelligent coding assistant that can significantly improve developer productivity. Such an agent can integrate with codebases, documentation, and testing frameworks to help with various tasks:

- Code Generation and Refactoring: An agentic RAG can generate boilerplate code or even entire functions based on a natural language request, pulling in relevant API usage examples from documentation. It can also suggest refactoring by retrieving best practice snippets from a knowledge base. This helps developers automate tedious coding tasks and adhere to standards.

- Automated Code Review: By retrieving style guides and past code review comments, the agent can analyze new code changes for potential bugs or violations. It then provides feedback or corrections, effectively serving as an AI code reviewer. It identifies issues and suggests improvements using both the project’s context and general programming knowledge.

- Testing and Debugging: Agentic RAG can create unit tests and integration tests by studying the code and documentation. For debugging, it can search error logs, relevant commit history, or support forums, then guide the developer to the likely fix. Tools like LangChain and LangGraph have been used to build agents that analyze code (even parsing syntax trees) and run tests automatically.

- Knowledge Retrieval for Devs: The agent serves as a smart documentation bot – instead of manually searching through wikis or issue trackers, a developer can ask a question and the agent will pull answers from design docs, GitHub issues, Stack Overflow, etc. For example, it might find how a certain API was used previously in the codebase. This means less time hunting through documentation, more time writing code.

Overall, in a dev team, an agentic RAG system becomes a context-aware co-pilot that writes, reviews, and debugs code by tapping into all available knowledge. This leads to faster development cycles and higher code quality.

In one project that we worked on, we built a full coding team made up of several agents, each with its own role. In this system, a high-level overview agent would take in a user’s request to build a certain app. This agent would then come up with three to five questions to gain a better understanding of what the user wanted. This step wasn’t strictly necessary, but we felt it would reduce the risk of scope creep by narrowing the focus early.

Thereafter, the high-level overview agent would draft a project spec and pass it on to a coordinator agent, which would take the spec and allocate resources. In this case, the resources were other agents, each focused on a specific part of software development: one for backend development, one for frontend development, and a QA agent that would write and run unit tests on the code.

That last agent was responsible for ensuring the code met the requirements in the spec drafted by the high-level overview agent. If tests failed or it found something wrong, it would loop back to the appropriate development agent(s) to make the changes or fixes. This loop continued until the QA agent judged that the requirements were met.
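The control flow of a team like this can be sketched with stub agents. To be clear, this is a hypothetical reconstruction of the loop described above, not our project’s actual code: the spec is hard-coded, "code" is just a pass/fail flag per requirement, the backend agent is rigged to fail once so the QA loop has something to catch, and the `max_rounds` cap is an added safeguard.

```python
from dataclasses import dataclass, field

@dataclass
class Spec:
    requirements: list[str]

@dataclass
class Codebase:
    # Stand-in for real code: records whether each requirement currently passes.
    passing: dict[str, bool] = field(default_factory=dict)

def overview_agent(request: str) -> Spec:
    # Hypothetical: a real agent would ask clarifying questions and draft the spec with an LLM.
    return Spec(["backend: login endpoint", "frontend: login form"])

class DevAgent:
    def __init__(self, buggy_first_attempt: bool = False):
        self.attempts = 0
        self.buggy_first_attempt = buggy_first_attempt

    def implement(self, requirement: str, code: Codebase) -> None:
        self.attempts += 1
        # Simulate a flawed first attempt so the QA loop has something to catch.
        code.passing[requirement] = not (self.buggy_first_attempt and self.attempts == 1)

def qa_agent(spec: Spec, code: Codebase) -> list[str]:
    # Returns the requirements whose tests fail.
    return [r for r in spec.requirements if not code.passing.get(r, False)]

def coordinator(request: str, max_rounds: int = 5) -> tuple[bool, int]:
    spec = overview_agent(request)
    team = {"backend": DevAgent(buggy_first_attempt=True), "frontend": DevAgent()}
    code = Codebase()
    todo = spec.requirements
    for round_no in range(1, max_rounds + 1):
        for req in todo:
            team[req.split(":")[0]].implement(req, code)  # send each item to its specialist
        todo = qa_agent(spec, code)  # failures loop back to the responsible agent
        if not todo:
            return True, round_no
    return False, max_rounds
```

Here `coordinator("Build a login app")` returns `(True, 2)`: the first round leaves the backend requirement failing, QA routes it back, and the second round passes.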

Customer Service and Support

Customer service bots and support assistants greatly benefit from agentic RAG’s ability to handle multi-step queries and integrate with business data:

- Advanced Chatbot Assistants: Instead of a basic FAQ bot, an agentic RAG support agent can hold a conversation with a user, retrieve personalized information, and even take actions. For instance, when a customer asks about an issue with their account, the agent can fetch account details, knowledge base articles, and troubleshooting procedures across multiple systems in real time. It might pull recent order data from a database, check for known issues in a support ticket repository, and then provide a solution or a form to request further help.

- Ticket Triage and Routing: In a helpdesk scenario, the agent can read incoming support tickets or chats, determine what product or issue they relate to, and automatically route them to the right team or knowledge category. It classifies and prioritizes issues using both keywords and learned patterns. This automation speeds up response times.

- Multistep Issue Resolution: Agentic RAG support systems can handle multi-layered customer inquiries. For example, say a user is having trouble with a software product. The agent can ask clarifying questions, run a diagnostic tool or query logs via API, cross-reference documentation for that error code, and either give the fix or escalate to a human with a summary of findings. Because it retains conversational context, the agent remembers what the customer has tried already and adapts its next steps.

- Personalized Service: Since agents have short-term memory of the interaction and can access user-specific data, they tailor responses to the individual. The agent might greet the user by name, recall past issues they had, and avoid repeating information the user has already provided. This creates a more personalized support experience compared to one-size-fits-all answers.
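Ticket triage, in its simplest form, can be sketched as scoring each team against the words in a ticket. This is a deliberately crude, hypothetical example: keyword overlap stands in for the classification that an LLM or trained model would perform, and the team names and keyword sets are invented for illustration.

```python
import re

# Hypothetical routing table: team -> keywords that suggest a ticket belongs to it.
ROUTES = {
    "billing": {"invoice", "refund", "charge", "payment"},
    "technical": {"error", "crash", "bug", "login"},
    "shipping": {"delivery", "tracking", "package"},
}
URGENT = {"urgent", "asap", "down", "outage"}

def triage(ticket: str) -> dict:
    words = set(re.findall(r"[a-z]+", ticket.lower()))
    scores = {team: len(words & keywords) for team, keywords in ROUTES.items()}
    best = max(scores, key=scores.get)
    # No keyword hit at all: send the ticket to a general queue for a human to sort.
    team = best if scores[best] > 0 else "general"
    priority = "high" if words & URGENT else "normal"
    return {"team": team, "priority": priority}
```

Exact-word matching is brittle ("charged" does not match "charge"), which is one reason production systems lean on learned classifiers or an LLM for this step.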

In essence, agentic RAG turns support bots into autonomous customer service reps that can intelligently navigate company knowledge and user data to resolve queries. Businesses such as call centers, e-commerce platforms, and SaaS providers are exploring these systems to reduce the load on human agents while maintaining service quality.

Research and Knowledge Work

For any industry involving heavy research or data analysis – from finance to legal to academia – agentic RAG can function as an on-demand research assistant:

- Legal and Regulatory Research: Law firms can employ agentic RAG to sift through vast libraries of case law and regulations. An agent can search for relevant precedents, cross-check statutes, and even trace citation networks across documents. Given a complex legal question, the agent might break it down: first find applicable laws, then relevant case outcomes, then summarize everything. This saves lawyers hours of manual search while providing a comprehensive answer.

- Financial Analysis and Business Intelligence: In finance, an agentic RAG system might handle a query like “Analyze this quarter’s performance vs last year.” The agent could retrieve data from internal financial databases, cross-reference with market news, and call a tool to generate charts or compute metrics. The final output could be an actionable report with graphs and explanations, all compiled autonomously. For investment research, it might pull SEC filings, compare them to news articles, and write a summary of risks and opportunities.

- Academic and Scientific Research: Researchers can use agentic RAG assistants to gather literature and insights. If you ask such an agent “What are recent advances in agentic RAG?”, it could search academic papers, identify key findings, and even compile a literature review style answer. It can iterate by finding citations within those papers (a task a single-pass system can’t do easily). This helps in research synthesis – summarizing diverse sources with precision. Similarly, in data science, an agent could run queries on datasets, then use statistical tools to interpret the results, providing both the numbers and the narrative interpretation.

- Content Creation and Reports: In marketing or journalism, an agentic RAG could gather information on a topic from various sources (web articles, internal reports), then draft a piece that is both up-to-date and context-rich. It’s like having a researcher and writer in one, ensuring facts are checked against sources. The agent’s ability to use tools also means it could fetch real-time data (stock prices, social media trends) as part of the content generation.
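Following citations from paper to paper, as in the academic research example above, amounts to a bounded graph traversal. The sketch below assumes a hypothetical in-memory citation graph with made-up paper IDs; a real agent would fetch and read each paper at every hop, which is why the depth limit matters for cost.

```python
from collections import deque

# Hypothetical citation graph: paper id -> ids of the papers it cites.
CITES = {
    "seed": ["paper-a", "paper-b"],
    "paper-a": ["paper-c"],
    "paper-b": ["paper-c", "paper-d"],
    "paper-c": [],
    "paper-d": ["paper-e"],
    "paper-e": [],
}

def expand_citations(seed: str, max_depth: int = 2) -> list[str]:
    """Breadth-first walk of the citation graph, stopping max_depth hops from the seed."""
    seen = {seed}
    order = [seed]
    queue = deque([(seed, 0)])
    while queue:
        paper, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not expand beyond the depth budget
        for cited in CITES.get(paper, []):
            if cited not in seen:  # skip papers already reached by a shorter path
                seen.add(cited)
                order.append(cited)
                queue.append((cited, depth + 1))
    return order
```

With `max_depth=2` the walk stops before `paper-e`; raising it to 3 pulls that paper in as well.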

Across these scenarios, the hallmark is dynamic information handling. The agent can navigate large, unstructured data and surface the specific answers needed, whether that means summarizing a trove of documents or cross-analyzing different information streams. This is particularly useful in fields where information changes rapidly or is scattered across many places.

Education and Personalized Learning

Education is another area seeing the benefits of agentic RAG systems. Intelligent tutoring systems or study aids powered by agentic RAG can adapt to each learner and provide rich, context-aware assistance:

- Personalized Tutoring: An educational agent can answer student questions by pulling information from textbooks, lecture notes, or the web. But beyond a normal homework helper, an agentic RAG tutor can adjust its teaching strategy to the student. For example, if a student is struggling with a math problem, the agent might break the problem into steps, ask the student intermediate questions, retrieve hints from course materials, and provide feedback on where the student went wrong. This leads to interactive explanations and personalized feedback that a static system couldn’t manage.

- Curriculum and Content Customization: Such agents could generate practice problems or quizzes tailored to a student’s progress by retrieving relevant topics the student hasn’t mastered. They might also use external knowledge – for instance, incorporating a recent real-world event into an example for engagement. Because the agent can use tools, it might pull in an image or a diagram if a visual explanation would help (leveraging multimodal abilities).

- Research and Writing Assistance: For higher education, an agentic RAG can help students or educators summarize research papers, gather references on a topic, or even check a draft for factual accuracy by verifying against sources. It’s like having a knowledgeable assistant who can provide context and clarification on demand. If a student asks, “Explain the significance of a historical event,” the agent can compile perspectives from textbooks and reputable websites, then present an easy-to-understand synthesis – all while citing its sources.

- Teacher’s Aid: From the instructor side, agentic RAG might assist in grading (by comparing a student’s answer to known correct answers from material), or in preparing lesson plans (retrieving examples and analogies to include in a lecture). Its ability to search across many documents can surface connections between topics that help in interdisciplinary teaching.

In summary, adaptive learning is the key advantage here. The agentic RAG system can respond to the learner’s needs in real time, drawing on educational content and adjusting the approach as a human tutor would. This personalization and autonomy in handling educational content make learning more engaging and effective.

Benefits of Agentic RAG Systems

Agentic RAG systems offer several compelling benefits over traditional AI assistants and simpler RAG implementations. Below are some of the main benefits.

Increased Autonomy

Agentic RAG systems can operate with much less hand-holding.

An agent with RAG capabilities doesn’t wait for a perfectly crafted prompt or a user’s step-by-step instructions – it figures out the steps on its own.

That autonomy means the AI can handle objectives like “research this topic and give me a summary” by planning the queries and actions required.

For users, it feels more like interacting with an expert assistant rather than a search engine. The agent can determine when to use which tool, when to stop searching, and how to combine information, all autonomously.

Dynamic Task Handling

Agentic RAG systems excel at multi-step and complex tasks. They dynamically break down queries, adapt to new information, and handle conditional workflows (e.g., “If I don’t find X, try Y next”). This is crucial for tasks like troubleshooting, planning, or analytical queries that involve several sub-tasks.
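A conditional workflow of the "if I don’t find X, try Y next" kind can be sketched as an ordered list of query rewrites. Everything here is hypothetical: the one-line knowledge base, the error-code normalization rule, and the decision to return `None` (so a caller could escalate) when every attempt fails.

```python
import re
from typing import Optional

# Hypothetical knowledge base keyed by normalized error codes.
KB = {
    "error e42": "E42 means the license has expired; renew it from the admin console.",
    "error e17": "E17 means the network is unreachable; check the proxy settings.",
}

def lookup(query: str) -> Optional[str]:
    q = query.lower()
    for key, text in KB.items():
        if key in q:
            return text
    return None

def candidate_queries(query: str) -> list[str]:
    candidates = [query]  # step 1: try the user's own wording
    code = re.search(r"\be-?(\d+)\b", query, re.IGNORECASE)
    if code:
        candidates.append(f"error e{code.group(1)}")  # step 2: normalized error code
    return candidates

def resolve(query: str) -> tuple[str, Optional[str]]:
    # Walk the candidates in order; stop at the first one that finds something.
    for attempt in candidate_queries(query):
        answer = lookup(attempt)
        if answer is not None:
            return attempt, answer
    return query, None  # nothing found: the caller could escalate to a human
```

For example, "App shows E-42 on startup" misses on the raw wording but succeeds once rewritten to "error e42", whereas an unrelated query falls through to `None`.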

The agent’s ability to reason and iterate leads to more thorough and context-rich responses, especially for queries that are not straightforward. In practice, this tends to improve results on complex queries that a one-shot system would struggle with.

Personalized and Contextual Outputs

By maintaining memory and pulling user-specific data, agentic RAG systems produce answers that are tailored to the context and the user.

They can remember a user’s previous questions or preferences within a session (short-term memory) and even across sessions if designed to (long-term memory). This personalization manifests as more relevant answers – for example, an agent might provide different solutions to an engineer and a layperson asking the same question, adjusting the explanation based on their background.

They can also incorporate personal data (with permission), such as referring to “your last login on Jan 5” in a support query, making the interaction more customized and useful.
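The split between short-term and long-term memory described above might be laid out as follows. This is one hypothetical design among many: a bounded per-session buffer of recent turns plus a per-user preference store that persists across sessions, both merged into the context string the agent would hand to the LLM.

```python
from collections import deque

class AgentMemory:
    """Hypothetical layout: bounded short-term memory per session,
    plus long-term user preferences that survive across sessions."""

    def __init__(self, short_term_size: int = 3):
        self.short_term_size = short_term_size
        self.sessions: dict[str, deque] = {}
        self.long_term: dict[str, dict[str, str]] = {}

    def add_turn(self, session_id: str, text: str) -> None:
        buf = self.sessions.setdefault(session_id, deque(maxlen=self.short_term_size))
        buf.append(text)  # the oldest turn is dropped once maxlen is reached

    def remember(self, user_id: str, key: str, value: str) -> None:
        self.long_term.setdefault(user_id, {})[key] = value

    def context_for(self, user_id: str, session_id: str) -> str:
        prefs = self.long_term.get(user_id, {})
        recent = list(self.sessions.get(session_id, []))
        pref_text = ", ".join(f"{k}={v}" for k, v in sorted(prefs.items()))
        return f"User preferences: {pref_text or 'none'}\nRecent turns: {' | '.join(recent)}"
```

A stored preference such as `expertise=engineer` would let the agent pitch its explanation differently from how it would for a layperson, even in a brand-new session.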

Up-to-Date Knowledge and Accuracy

Like any RAG system, agentic RAG keeps the AI’s knowledge base current by retrieving the latest information.

But beyond that, the agent’s iterative approach helps in improving accuracy. It can verify facts by cross-checking multiple sources or refine its search if initial results seem outdated or irrelevant. This reduces the chance of the AI giving wrong or outdated answers.

In fields where information changes quickly (news, finance, tech), this ability to continually update context is invaluable. Agents can also reduce hallucinations by grounding responses in retrieved evidence and even explicitly filtering out answers that aren’t supported by sources.
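Cross-checking and filtering unsupported claims can be sketched as follows, with a heavy caveat: word overlap is a crude stand-in for the entailment check that real systems more often delegate to an LLM or a natural-language-inference model. The thresholds (`min_overlap=0.8`, `min_sources=2`) are arbitrary assumptions.

```python
import re

def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def supported(claim: str, sources: list[str], min_overlap: float = 0.8, min_sources: int = 2) -> bool:
    # A claim counts as supported if at least `min_sources` sources each contain
    # at least `min_overlap` of the claim's words (a crude proxy for entailment).
    claim_words = tokens(claim)
    if not claim_words:
        return False
    hits = sum(1 for s in sources if len(claim_words & tokens(s)) / len(claim_words) >= min_overlap)
    return hits >= min_sources

def filter_answer(claims: list[str], sources: list[str]) -> list[str]:
    # Drop any claim that is not backed by enough independent sources.
    return [c for c in claims if supported(c, sources)]
```

Given two sources saying the Fed raised rates in March, the claim "The Fed raised rates in March" survives, while "The Fed cut rates in April" is filtered out.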

Broader Task Range (Tool Use & Multimodality)

With integrated tool use, agentic RAG systems can perform a variety of actions: database lookups, math calculations, API calls, even controlling other software. This means the AI isn’t limited to just Q&A or text generation – it can execute tasks on the user’s behalf.

For example, it could draft an email, schedule a meeting via a calendar API, or generate a visualization from data. Multimodal support allows these agents to interpret images, audio, or other data forms when needed.

In short, the AI becomes more of a general problem-solving agent than just a text bot.
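Mechanically, tool use usually means the model emits a structured request that the application executes. The sketch below assumes the model returns JSON of the form `{"name": ..., "arguments": {...}}`; that shape is an illustrative assumption (the exact format varies by provider), and the two tools and the `register_tool` decorator are hypothetical.

```python
import json
from datetime import date

TOOLS = {}

def register_tool(fn):
    # Make a plain Python function callable by name from a model's tool request.
    TOOLS[fn.__name__] = fn
    return fn

@register_tool
def add_numbers(a: float, b: float) -> float:
    return a + b

@register_tool
def days_until(iso_date: str, today: str) -> int:
    return (date.fromisoformat(iso_date) - date.fromisoformat(today)).days

def dispatch(tool_call_json: str):
    # Assumed request shape: {"name": "<tool>", "arguments": {...}}.
    call = json.loads(tool_call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        raise ValueError(f"unknown tool: {call['name']}")
    return fn(**call["arguments"])
```

The tool’s result would then be fed back into the model’s context so it can continue reasoning or compose the final answer.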

Continuous Learning and Improvement

Some agentic RAG implementations incorporate feedback loops where the agent learns from its successes and failures. For instance, if an agent’s query didn’t yield a good answer, it can adjust its strategy next time.

Also, by logging reasoning chains and user feedback, developers can analyze and fine-tune the agent’s behavior over time. This leads to systems that improve with usage, gradually requiring less intervention to deliver quality results. Traditional AI assistants often lack this self-refinement aspect.

In essence, agentic RAG systems provide a more powerful, adaptive, and user-aware AI experience. Users get better answers with less effort in crafting queries, and the AI can handle a wider array of tasks end-to-end. These benefits are driving interest in agentic RAG for enterprise applications despite the added complexity.

Challenges and Considerations

Despite their advantages, agentic RAG systems also introduce new challenges and risks. It’s important to be aware of these when designing or deploying such systems.

System Reliability and Complexity

With many moving parts (multiple agents, tools, memory stores), there’s more that can go wrong. Agents might fail to complete a task or get stuck in loops if something unexpected happens.

Coordination in a multi-agent setup is complex – agents could conflict or overload shared resources. This complexity also means debugging is harder when the system misbehaves. Ensuring reliability often requires adding safeguards: timeouts, fallback strategies if an agent crashes, and thorough testing of different scenarios.

Vanilla RAG pipelines can be brittle, but agentic RAG adds even more points of failure if not carefully managed.
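Two of the safeguards mentioned above, a cap on steps and an overall time budget, plus a fallback when both run out, can be wrapped around any agent loop. This is a minimal sketch assuming a hypothetical `step(i)` function that returns an answer or `None`. It checks the deadline only between steps; stopping a single hung call would need a per-call timeout (for example via `concurrent.futures`).

```python
import time
from typing import Callable, Optional

def run_guarded(step: Callable[[int], Optional[str]], max_steps: int = 5,
                time_budget_s: float = 2.0,
                fallback: str = "Escalating to a human.") -> str:
    """Run an agent step function until it returns an answer, guarded by a
    step cap (prevents endless loops) and a wall-clock budget."""
    deadline = time.monotonic() + time_budget_s
    for i in range(max_steps):
        if time.monotonic() > deadline:
            break
        try:
            answer = step(i)
        except Exception:
            continue  # treat a crashing step (e.g. a failed tool call) as a wasted step
        if answer is not None:
            return answer
    return fallback
```

A step that never produces an answer ends in the fallback instead of looping forever, and a step that raises once does not take the whole run down.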

Hallucination Risks

While grounding via retrieval reduces hallucinations (the AI making up facts), it does not eliminate them entirely. An agent might misinterpret retrieved data or stitch together an answer that seems plausible but is wrong.

There’s also the risk of hallucinated tool use – e.g., the agent may attempt to call a tool in a way it’s not designed for. Advanced agentic RAG designs use techniques like reflection or self-checking (having the agent verify if its answer addresses the query) to mitigate this. Some systems implement a “critic” agent or step that reviews the answer for errors.

Nonetheless, constant vigilance is needed to ensure the AI isn’t confidently outputting false or misleading information.
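One practical defense against hallucinated tool use is to validate a model-proposed call against the tool’s real signature before executing it, and feed any problems back to the model. The sketch below uses Python’s `inspect.signature`; the `search_orders` tool and its allowlist are hypothetical.

```python
import inspect

def search_orders(customer_id: str, limit: int = 5) -> list:
    return [f"order-{i}" for i in range(limit)]  # stub tool

ALLOWED = {"search_orders": search_orders}

def validate_call(name: str, args: dict) -> list[str]:
    """Return problems with a model-proposed tool call (an empty list means OK)."""
    fn = ALLOWED.get(name)
    if fn is None:
        return [f"unknown tool '{name}'"]
    problems = []
    params = inspect.signature(fn).parameters
    for arg, value in args.items():
        if arg not in params:
            problems.append(f"unexpected argument '{arg}'")
        elif params[arg].annotation is not inspect.Parameter.empty and not isinstance(value, params[arg].annotation):
            problems.append(f"'{arg}' should be {params[arg].annotation.__name__}")
    for pname, p in params.items():
        if p.default is inspect.Parameter.empty and pname not in args:
            problems.append(f"missing argument '{pname}'")
    return problems
```

Returning the problem list, rather than raising, lets the agent show the model exactly what was wrong so it can retry with a corrected call.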

Evaluation Difficulties

Measuring the performance of agentic RAG systems is non-trivial. Traditional QA systems can be evaluated with straightforward accuracy metrics on a fixed set of questions.

But agentic systems involve multi-step reasoning and tool usage – there’s a lack of specialized benchmarks to capture how well an agent is reasoning or when it should stop searching.

Developing evaluation methodologies that account for criteria like reasoning correctness, appropriate tool use, and collaboration between agents is an active area of research. For now, many teams rely on extensive scenario testing or user feedback to gauge performance. This makes iterative development slower.

Additionally, demonstrating the ROI of a complex agentic RAG deployment to stakeholders can be challenging when success metrics aren’t clearly defined (e.g. how do you quantify the value of better reasoning?).

Ethical and Safety Considerations

Empowering AI with more autonomy raises ethical concerns. With agentic RAG, an AI may take actions (via tool use) that have real consequences – for example, an agent hooked up to an email API could send messages on a user’s behalf.

Ensuring the AI’s decisions are transparent and aligned with user intent is crucial. There’s also the risk of bias or misuse: if the underlying data has biases, an autonomous agent might propagate or even amplify them in its decision-making.

Privacy is another issue – these agents often need access to potentially sensitive data to be effective (emails, internal documents), so strict access control and data handling policies are needed.

Developers must implement guardrails: an agent should have constraints on what tools it can use and require user confirmation for high-impact actions.
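Both guardrails can be enforced in a thin wrapper around tool execution. This is a minimal sketch with a hypothetical policy (the tool names and the two sets are invented); `confirm` stands in for whatever UI prompt asks the user to approve an action.

```python
from typing import Callable

# Hypothetical policy: which tools this agent may use, and which need human sign-off.
ALLOWED_TOOLS = {"search_docs", "send_email"}
NEEDS_CONFIRMATION = {"send_email"}

def execute(tool_name: str, args: dict, confirm: Callable[[str, dict], bool]) -> str:
    if tool_name not in ALLOWED_TOOLS:
        return f"blocked: '{tool_name}' is not on this agent's allowlist"
    if tool_name in NEEDS_CONFIRMATION and not confirm(tool_name, args):
        return f"cancelled: user declined '{tool_name}'"
    return f"executed {tool_name}"  # a real system would invoke the tool here
```

Keeping the policy outside the model’s prompt means the agent cannot talk its way past it: an unlisted tool is blocked no matter what the model requests.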

Human oversight (a “human in the loop”) is often recommended for critical tasks, so the agent doesn’t become an unchecked decision-maker. We like to have a human in the loop wherever possible with the projects that we develop.

In summary, accountability, transparency, and control are key – one should always be able to trace how an agent arrived at a decision and override it if necessary.

Resource and Cost Overheads

Agentic RAG systems can be computationally heavy and expensive. Each additional agent step may call an LLM (consuming tokens and adding latency) or hit a database.

A multi-step query might incur multiple round-trips, increasing latency and API costs. There’s also the overhead of maintaining multiple knowledge sources and tool integrations. Caching and optimization strategies (like reusing results or limiting the depth of reasoning loops) are often needed to keep systems responsive and cost-effective.
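Reusing results can be as simple as memoizing the retrieval call. This sketch uses the standard library’s `functools.lru_cache`; `cached_retrieve` is a hypothetical stand-in for an expensive vector search or API call, and the light query normalization is an assumption that lets trivially different phrasings share a cache entry.

```python
from functools import lru_cache

CALLS = {"count": 0}  # counts real (uncached) backend hits

def normalized(query: str) -> str:
    # Collapse case and whitespace so near-identical queries map to one cache key.
    return " ".join(query.lower().split())

@lru_cache(maxsize=1024)
def cached_retrieve(query: str) -> tuple[str, ...]:
    CALLS["count"] += 1
    # Stand-in for an expensive vector search or API call. Returning a tuple
    # keeps cached results immutable, so callers cannot corrupt the cache.
    return (f"result for {query}",)
```

Calling `cached_retrieve(normalized(...))` with "Rate limits?" and then "  rate   LIMITS? " hits the backend only once. Real deployments also need an expiry policy, since cached results can go stale.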

Organizations have to balance the improved capabilities with the infrastructure investment required.

None of these challenges is insurmountable. Active research and engineering efforts are underway to enhance agent reliability (through better planning algorithms), reduce hallucinations (via verification steps and hybrid human-AI checks), create evaluation standards, and build ethical guidelines for autonomous AI.

Anyone implementing an agentic RAG should proceed with careful design and testing, but the potential rewards – in terms of AI usefulness and user satisfaction – can make it well worth the effort.


Frequently Asked Questions

How do agentic RAG systems work?

Agentic RAG systems work by incorporating one or more AI agents into the RAG pipeline to manage the retrieval and reasoning process dynamically. Instead of a fixed “query -> retrieve -> answer” sequence, an agentic RAG uses a loop of planning, acting, and observing.

Here’s a simplified view: When a user asks a question, the agent analyzes the query and plans a strategy – for example, deciding which knowledge base or tool to query first. The agent then performs a retrieval action, such as searching a vector database or calling an API. It observes the results, and if the information is insufficient or leads to new questions, the agent will refine the query or use another tool.

That cycle repeats, with the agent possibly breaking the problem into sub-tasks or consulting multiple sources, until it has enough context to generate a good answer. Finally, the agent uses the LLM to produce a response, often combining information from all the retrieved snippets.

In essence, the agent acts as an orchestrator: it routes queries to the right sources, gathers and filters information, and only stops once the task (answering the user’s request) is completed satisfactorily. This is in contrast to traditional systems that retrieve once and don’t self-adjust. Some agentic RAG setups use multiple agents (a multi-agent RAG), where different agents handle different parts of the task (one might gather data while another formulates the answer, for instance).

The end result is an AI system that works in stages and can intelligently decide how to get to an answer rather than doing it in one shot.
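The plan, act, observe cycle described in this answer can be sketched with a scripted planner in place of the LLM. Everything here is hypothetical: the two-entry knowledge base, the hard-coded planning rules in `plan_next`, and the step cap. The point is the shape of the loop: the second search depends on what the first one returned, which a single retrieval pass cannot do.

```python
# Hypothetical knowledge base standing in for a vector store or search API.
KNOWLEDGE = {
    "ceo of acme": "Jane Doe is the CEO of Acme.",
    "jane doe education": "Jane Doe studied physics at MIT.",
}

def search(query: str) -> str:
    return KNOWLEDGE.get(query.lower(), "no results")

def plan_next(question: str, notes: list[str]) -> tuple[str, str]:
    # Scripted stand-in for the LLM planning step: choose the next action
    # based on what has been gathered so far.
    if not notes:
        return "search", "CEO of Acme"
    if len(notes) == 1 and "Jane Doe" in notes[0]:
        return "search", "Jane Doe education"  # follow-up depends on the first result
    return "answer", " ".join(notes)

def run_agent(question: str, max_steps: int = 5) -> tuple[str, list[str]]:
    notes: list[str] = []
    trace: list[str] = []
    for _ in range(max_steps):
        action, arg = plan_next(question, notes)  # plan
        trace.append(f"{action}: {arg}")
        if action == "answer":
            return arg, trace  # a real agent would have the LLM write the final answer
        observation = search(arg)  # act
        if observation != "no results":
            notes.append(observation)  # observe
    return "Could not find a well-supported answer.", trace
```

For "Where did the CEO of Acme study?", the trace shows two searches followed by an answer built from both observations; if the first search came back empty, the step cap would end the loop with the fallback message.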

Are agentic RAGs better than traditional AI assistants?

“Better” depends on the context – agentic RAGs are more powerful and flexible, but they’re not always the right choice for every situation.

Compared to a traditional AI assistant (like a standard chatbot or a basic RAG system), an agentic RAG can handle more complex queries, use real-time data, and provide more tailored responses, which often means a better outcome for difficult or multi-faceted tasks.

For example, if you need an AI to research and compile information across various sources, an agentic RAG will typically outperform a traditional assistant by a wide margin. However, these benefits come with trade-offs. Agentic RAG systems usually require more computational resources and can be slower, because the AI is doing more work (multiple retrievals and reasoning steps).

Simple queries that a normal assistant could answer in one step might take longer with an agentic approach, as the agent might overanalyze or use unnecessary steps. There’s also the aspect of reliability: traditional assistants have fewer failure points (since they don’t loop or use many tools), whereas an agentic RAG might occasionally get confused by a complicated tool use sequence and fail to complete the task.

Additionally, more agents and more API calls mean higher cost (for cloud API usage or maintenance). In scenarios like real-time customer chat, a three-second delay for the agent to think could be a deal-breaker if a simpler bot could respond in one second.

In summary, agentic RAGs are “better” for complex, high-value tasks that need their advanced capabilities, but for straightforward Q&A or when speed is crucial, a traditional AI assistant might suffice.

A common recommendation is to use agentic RAG only when necessary: if a regular RAG pipeline or assistant meets the requirements, its simplicity is an advantage. It’s about using the right tool for the job.

What are some popular frameworks or tools for building agentic RAG systems?

The ecosystem for agentic RAG is rapidly growing. A few notable frameworks and tools have emerged to help developers build these systems:

- LangChain: An open-source framework that provides building blocks for LLM applications, including agents. LangChain makes it easier to define chains of prompts, tool usage, and memory. It has modules specifically for creating agent behaviors (such as ReAct-style agents, which interleave reasoning steps with tool calls) and for integrating with data stores. Many developers use LangChain as a backbone for agentic RAG because it supports connecting to databases, web search APIs, calculators, and more via simple abstractions.

- LlamaIndex (formerly GPT Index): A framework focused on connecting LLMs with external data sources. It allows you to index various data (documents, PDFs, SQL databases) and then query them with LLMs. For agentic RAG, LlamaIndex can be used in conjunction with an agent to handle the retrieval part – it provides efficient ways to do the knowledge lookup, which the agent can then reason over.

- LangGraph: An extension of LangChain aimed at more complex agent orchestration. It lets developers design agents and their decision flows as a graph of nodes (where each node might be a retrieval step, a reasoning step, and so on). LangGraph is useful for visualizing and controlling multi-agent workflows. It is frequently used in agentic RAG to build structured reasoning paths and supports advanced patterns such as plan-and-execute agents.

- Microsoft’s Semantic Kernel: An SDK from Microsoft that allows developers to create AI plugins and orchestrate LLMs with external skills (functions). It’s not specific to RAG, but it provides tools to manage prompts, memory, and function calls in a way that’s helpful for building agent-like behavior. For instance, you can define a sequence in which the AI calls a “SearchDocuments” function and then reasons over the result. It’s gaining traction for enterprise applications of agentic AI due to its integration with .NET and Azure services.

- IBM watsonx Orchestrate: IBM’s offering in this space, a platform for building AI agents that can interact with business tools and data (part of IBM’s watsonx portfolio). It likely abstracts away much of the complexity and provides governance, which is important for enterprise use of agentic RAG. IBM’s documentation also references AutoGen, an open-source multi-agent framework from Microsoft, and describes IBM’s own work on agent orchestration and evaluation.

- Open-Source Agent Projects: There are also well-known open-source “autonomous agent” projects like AutoGPT, BabyAGI, and MetaGPT. These emerged from the community as experiments to let LLMs operate autonomously. While not all are specifically focused on retrieval augmentation, many incorporate RAG techniques (for example, AutoGPT can search the web and gather information). They serve as inspiration and sometimes provide utilities for building agentic systems. For a more RAG-centric approach, projects like LangChain’s Agents and PromptChainer are often used.

- NVIDIA NeMo Toolkit: NVIDIA offers the NeMo framework and, more recently, the AI-Q blueprint, which includes agentic RAG components. Related building blocks such as NeMo Retriever (embedding and reranking microservices) and the NeMo Agent toolkit help developers create agents that draw on dynamic knowledge. This is more specialized, but worth noting for teams already in the NVIDIA ecosystem.
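Most of these frameworks wrap the same core idea: a loop in which the model picks a tool, observes the result, and decides what to do next (the ReAct style of agent mentioned for LangChain). The framework-free sketch below illustrates that loop. The tools, the revenue figures, and `scripted_policy` (a fixed stand-in for the LLM’s decisions) are all hypothetical, and none of it reflects any framework’s actual API.

```python
from typing import Callable


def search_documents(query: str) -> str:
    """Hypothetical retrieval tool backed by a tiny in-memory 'knowledge base'."""
    docs = {"q3 revenue": "Q3 revenue was $120M.", "q2 revenue": "Q2 revenue was $100M."}
    return docs.get(query.lower(), "no results")


def calculator(expression: str) -> str:
    """Handles only 'a/b' to stay safe; never eval() model-generated text."""
    a, b = expression.split("/")
    return f"{float(a) / float(b):.2f}"


TOOLS: dict[str, Callable[[str], str]] = {"search": search_documents, "calc": calculator}


def scripted_policy(question: str, history: list[tuple[str, str, str]]) -> tuple[str, str]:
    """Stand-in for the LLM: returns (action, argument); 'finish' ends the loop.
    A real agent would read the question and the observations in `history`."""
    plan = [("search", "Q3 revenue"), ("search", "Q2 revenue"), ("calc", "120/100")]
    if len(history) < len(plan):
        return plan[len(history)]
    return "finish", f"Q3 revenue was {history[-1][2]}x Q2 revenue."


def run_agent(question: str, max_steps: int = 5) -> str:
    history: list[tuple[str, str, str]] = []  # (action, argument, observation)
    for _ in range(max_steps):
        action, arg = scripted_policy(question, history)
        if action == "finish":
            return arg
        observation = TOOLS[action](arg)  # act, then record what was observed
        history.append((action, arg, observation))
    return "stopped: step limit reached"  # guard against endless loops


print(run_agent("How did Q3 revenue compare with Q2?"))
```

The `max_steps` cap matters in practice: it bounds cost and latency when the model keeps choosing new actions instead of finishing.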

On the infrastructure side, you’ll also need a vector database (such as Weaviate, Pinecone, Redis, or Milvus) to store and retrieve embeddings, and possibly supporting tools such as Airflow (to schedule data-ingestion pipelines) or FastAPI (to expose the agent as a web service) to glue everything together in production.
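For intuition about what the vector database does, here is a brute-force, in-memory stand-in that ranks stored embeddings by cosine similarity. The class name and the toy three-dimensional vectors are hypothetical; real systems use learned embeddings with hundreds or thousands of dimensions and approximate nearest-neighbor indexes rather than a full sort.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Brute-force stand-in for a vector database such as Weaviate, Pinecone, or Milvus."""

    def __init__(self) -> None:
        self.items: list[tuple[str, list[float]]] = []

    def add(self, text: str, embedding: list[float]) -> None:
        self.items.append((text, embedding))

    def search(self, query_embedding: list[float], k: int = 2) -> list[str]:
        """Return the k stored texts most similar to the query embedding."""
        ranked = sorted(self.items, key=lambda item: cosine(query_embedding, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


store = InMemoryVectorStore()
# Toy "embeddings"; a real pipeline would call an embedding model here.
store.add("refund policy", [0.9, 0.1, 0.0])
store.add("shipping times", [0.1, 0.9, 0.0])
store.add("return window", [0.8, 0.2, 0.1])
print(store.search([1.0, 0.0, 0.0], k=2))  # ['refund policy', 'return window']
```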

Each framework has its strengths: LangChain and LangGraph are well suited to Python-centric development and quick prototyping, LlamaIndex to indexing and querying external data, and Semantic Kernel to enterprise integration.

Many solutions end up combining pieces – for example, using LangChain within an Azure Function with Semantic Kernel, or using LlamaIndex for data ingestion and LangChain for the agent loop.

It’s an evolving landscape, so it’s wise to keep an eye on community forums and research publications for the latest and greatest tools.

How do agentic RAG systems handle mistakes or wrong answers?

A well-designed agentic RAG system has mechanisms to catch and correct mistakes, though none of them is foolproof. Several techniques help these systems handle errors and inaccuracies (hallucinations).

First, many systems incorporate a form of self-reflection or self-critique: after generating an answer, the agent assesses, “Did I actually answer the user’s question, and is my answer supported by the retrieved data?” If it detects a problem (perhaps via a secondary check or an evaluator agent), it can loop back to retrieve more information or revise the answer (an approach sometimes called Self-RAG or a self-correction loop).
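The self-correction loop can be sketched as follows. This is a simplified illustration, not the Self-RAG method itself: `retrieve`, `generate`, and `critique` are hypothetical stand-ins for a retriever, an LLM, and an evaluator agent, and the critique only checks whether each aspect of the question appears in the draft.

```python
# Hypothetical corpus; a real system would query a vector store.
CORPUS = [
    "The warranty covers parts for 12 months.",
    "Labor is covered for 90 days.",
]


def retrieve(question: str, k: int) -> list[str]:
    """Stand-in retriever: each retry widens the search by returning more passages."""
    return CORPUS[:k]


def generate(question: str, context: list[str]) -> str:
    """Stand-in for the LLM: here the 'answer' simply restates the evidence."""
    return " ".join(context)


def critique(draft: str, aspects: list[str]) -> list[str]:
    """Toy evaluator: return the aspects of the question the draft leaves unanswered."""
    return [a for a in aspects if a.lower() not in draft.lower()]


def answer_with_reflection(question: str, aspects: list[str], max_rounds: int = 3) -> tuple[str, int]:
    for round_no in range(1, max_rounds + 1):
        draft = generate(question, retrieve(question, k=round_no))
        if not critique(draft, aspects):
            return draft, round_no  # evaluator is satisfied
        # Otherwise loop back, retrieve more context, and regenerate.
    return "I could not find enough supporting evidence.", max_rounds


answer, rounds = answer_with_reflection("What does the warranty cover for parts and labor?", ["parts", "labor"])
print(rounds, answer)
```

The first draft mentions only parts, so the critique sends the agent back for a wider retrieval; the second round covers both aspects. When the evidence never appears, the loop gives up explicitly instead of returning an unsupported answer.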

Second, agentic RAG can use redundancy and verification: for important queries, the agent might retrieve from multiple independent sources. If two sources disagree, the agent can flag uncertainty or seek a third source to break the tie.
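A minimal sketch of that tie-breaking logic, assuming each source is a function that returns an already-normalized short answer (the source names and dates are hypothetical): the agent consults two sources, calls a third only when they disagree, and flags the result as uncertain if no answer wins a majority.

```python
from collections import Counter
from typing import Callable

Source = Callable[[str], str]


def verify(question: str, sources: list[Source]) -> dict:
    """Ask two sources; consult a third only if they disagree, then take a majority vote."""
    answers = [sources[0](question), sources[1](question)]
    if answers[0] != answers[1] and len(sources) > 2:
        answers.append(sources[2](question))  # tie-breaker
    best, votes = Counter(answers).most_common(1)[0]
    if votes >= 2:
        return {"answer": best, "uncertain": False, "answers": answers}
    # No majority: surface the disagreement instead of guessing.
    return {"answer": None, "uncertain": True, "answers": answers}


# Hypothetical sources returning a normalized launch year.
def internal_wiki(question: str) -> str:
    return "2019"


def vendor_docs(question: str) -> str:
    return "2020"


def press_release(question: str) -> str:
    return "2020"


print(verify("When did the product launch?", [internal_wiki, vendor_docs, press_release]))
```

Calling the third source only on disagreement keeps the common case at two lookups, which limits the extra cost of verification.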

Some systems also allow a human in the loop for validation. For example, an agent could draft an answer and then ask a human operator for approval if a confidence score is low. In tools used for customer support, agents may automatically escalate to a human when they’re not confident.
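The confidence-gated hand-off might look like this. The `Draft` type, the 0.75 threshold, and the in-memory review queue are illustrative assumptions; how the confidence score is produced (an evaluator model, retrieval scores, or something else) is left open.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.75  # hypothetical cut-off; tune per deployment


@dataclass
class Draft:
    text: str
    confidence: float  # assumed to lie in [0, 1], e.g. from an evaluator model


def deliver(draft: Draft, review_queue: list[Draft]) -> str:
    """Send confident drafts straight to the user; park the rest for human review."""
    if draft.confidence >= CONFIDENCE_THRESHOLD:
        return draft.text
    review_queue.append(draft)  # a human operator approves or edits it later
    return "A human agent will review your question and follow up shortly."


pending: list[Draft] = []
print(deliver(Draft("Your order ships on Monday.", 0.92), pending))
print(deliver(Draft("Your refund may be partial.", 0.41), pending))
print(len(pending))  # 1
```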

Additionally, to prevent known pitfalls, developers set constraints on the agent’s behavior (for instance, not allowing the agent to answer medical or legal questions without quoting sources, to avoid unsupported claims). Despite these measures, agentic RAG is not perfect – mistakes can still happen, especially if the knowledge base has gaps or if the AI model itself has learned some inaccuracies.
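A constraint like the one described can be enforced as a check that runs after generation. In this sketch, the list of sensitive terms and the `[1]`-style citation format are hypothetical; a real deployment would more likely rely on a topic classifier than on keyword matching.

```python
import re

# Hypothetical medical/legal trigger words.
SENSITIVE_TERMS = ("diagnosis", "dosage", "lawsuit", "contract")
# Assumes the generator cites retrieved sources inline as [1], [2], ...
CITATION = re.compile(r"\[\d+\]")


def enforce_citation_policy(question: str, answer: str) -> str:
    """Block unsourced answers to sensitive questions; pass everything else through."""
    sensitive = any(term in question.lower() for term in SENSITIVE_TERMS)
    if sensitive and not CITATION.search(answer):
        return "I can't answer that without a supporting source. Please consult a qualified professional."
    return answer


print(enforce_citation_policy("What dosage of ibuprofen is safe?", "Up to 400 mg per dose."))
print(enforce_citation_policy("What dosage of ibuprofen is safe?", "Up to 400 mg per dose [1]."))
```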

The key advantage is that an agentic setup gives the system multiple opportunities to catch an error (during retrieval, during reflection, and so on), whereas a single-pass system has only one shot. As the underlying models, retrieval methods, and evaluation benchmarks improve, we expect these systems to become increasingly reliable at delivering correct answers.

What’s the future outlook for agentic RAG systems?

Agentic RAG is a cutting-edge development at the intersection of retrieval-augmented AI and autonomous agents, and its future looks promising. We’re likely to see more sophisticated multi-agent systems in which different agents have specialized roles: one handling visual data, another focusing on reasoning, another on user interaction. This specialization could make them even more powerful and efficient.

There’s active research into making these systems faster and more cost-efficient, for example by improving how agents coordinate (to avoid redundant steps) and how they cache intermediate results.

On the academic front, the community is working on better evaluation methods and benchmarks for agentic AI, which will help standardize improvements and safety checks. We also anticipate tighter integration with domain-specific tools: imagine agentic RAG assistants embedded in healthcare decision-support systems, engineering design software, or scientific research platforms, where they can fetch data and also perform domain-specific computations.

As organizations adopt these systems, there will be a strong emphasis on governance and ethics; expect to see guidelines and perhaps regulations on the use of autonomous AI in sensitive areas. The concept of a “personal AI agent” might become reality, where individuals have their own agentic RAG system that learns their needs and preferences over time, acting as a highly personalized assistant.

In summary, the future will likely bring more powerful, more trusted agentic RAG systems that extend AI’s usefulness into ever more complex tasks, while the lessons learned today about reliability and ethics will shape their responsible deployment. It’s an exciting frontier in AI, merging knowledge retrieval with autonomous reasoning – a development that could redefine how we interact with technology in the years to come.